What is the Best CPU for a Local LLM?

The best CPU for running a local large language model (LLM) balances a high core/thread count, fast memory, and ample cache. Generating each token requires reading the model weights from RAM, so memory bandwidth often limits speed as much as raw compute does. Dedicated GPUs are usually faster for both training and inference, but modern CPUs can run smaller, quantized models (such as 7B or 13B parameter models) at usable speeds. Key specifications to prioritize include a high number of performance cores (P-cores), support for fast DDR4 or DDR5 memory, and a thermal design that can sustain long workloads.

Key Technical Specifications for LLM CPUs

For optimal local LLM performance, focus on these CPU characteristics:

- High Core/Thread Count: More physical cores and threads allow more parallel processing of the matrix operations in each model layer. Aim for a minimum of 6 performance cores.

- Large Cache Memory: A large L3 cache (e.g., 24 MB or more) helps by keeping frequently reused data close to the cores, although the weights of a multi-gigabyte model are still streamed from RAM.

- Memory Support: Support for high-speed, dual-channel DDR4 or DDR5 RAM is essential. System RAM capacity is equally important; 16 GB is a practical minimum, with 32 GB or more recommended for larger models.

- Thermal Design Power (TDP): A higher TDP (e.g., 45-65 W) typically indicates a CPU designed for sustained performance, which is necessary for extended inference sessions.
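The memory guidance above can be checked with simple arithmetic. The following is a rough sketch, not a benchmark: it assumes the weights take `parameters x bits per weight / 8` bytes, that a DDR4/DDR5 channel is 64 bits (8 bytes) wide, and that each generated token reads all weights once from RAM. The function names are illustrative. Real quantization formats often use slightly more than their nominal bit width, the KV cache and runtime need additional RAM, and measured throughput is typically below this ceiling.

```python
def model_weight_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * 1e9 * bits_per_weight / 8 / 1e9


def peak_bandwidth_gbs(mega_transfers_per_s: float, channels: int = 2,
                       bytes_per_transfer: int = 8) -> float:
    """Theoretical peak DRAM bandwidth in GB/s.

    Assumes a 64-bit (8-byte) bus per channel, e.g. DDR5-5600 means
    5600 mega-transfers per second.
    """
    return mega_transfers_per_s * 1e6 * bytes_per_transfer * channels / 1e9


def tokens_per_s_ceiling(weight_gb: float, bandwidth_gbs: float) -> float:
    """Upper bound on generation speed if every token reads all weights once."""
    return bandwidth_gbs / weight_gb


if __name__ == "__main__":
    weights = model_weight_gb(7, 4)       # 7B model, nominal 4-bit quantization
    bandwidth = peak_bandwidth_gbs(5600)  # dual-channel DDR5-5600
    ceiling = tokens_per_s_ceiling(weights, bandwidth)
    print(f"weights: {weights:.1f} GB")
    print(f"peak bandwidth: {bandwidth:.1f} GB/s")
    print(f"throughput ceiling: {ceiling:.1f} tokens/s")
```

Under these assumptions, a 7B model at 4 bits needs about 3.5 GB for weights, dual-channel DDR5-5600 peaks at 89.6 GB/s, and the resulting ceiling is about 25.6 tokens per second. A 13B model at the same precision needs about 6.5 GB, which is why 16 GB is a workable minimum and 32 GB leaves more headroom.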

Use Cases and Applications

Local LLM deployment on CPU-based systems is ideal for several scenarios:

- Privacy-Sensitive Environments: Processing data locally without sending it to the cloud, crucial for healthcare, legal, and financial applications.

- Edge AI and IoT: Running lightweight AI assistants or analytical models on industrial computers in manufacturing, retail, or digital signage.

- Development & Prototyping: A cost-effective platform for developers to test and iterate on AI applications before scaling to GPU clusters.

- Always-Available Assistants: Deploying a dedicated, offline-capable chatbot or coding assistant on a compact fanless PC.

CPU Comparison for LLM Workloads

| Processor Tier | Ideal For | Key Attributes | Example Use Case |
|---|---|---|---|
| Entry-Level (e.g., Intel N-Series) | Smaller, heavily quantized models (3B-7B params) | Low power, fanless design, 4-8 efficient cores | Embedded kiosk AI, basic text summarization |
| Mid-Range (e.g., Intel Core i5/i7) | Mainstream 7B-13B parameter models | 6-10 P-cores, higher clock speeds, larger cache | Desktop AI coding assistants, local research chatbots |
| High-Performance (e.g., Intel Core i9) | Larger 13B+ models & multi-model workloads | 14+ cores, very high cache, high TDP for sustained loads | AI-powered workstations, advanced data analysis |
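
The tiers in the table can be expressed as a small lookup, which may be handy when scripting hardware selection. This is only an illustrative sketch: the `TIERS` list and the `tiers_for` function are hypothetical names, the ranges are copied from the table, and the boundaries are treated as inclusive, so a 7B or 13B model matches two tiers.

```python
import math

# (tier name, min params in billions, max params in billions), taken from the table.
# Inclusive boundaries are an assumption; the table ranges overlap at 7B and 13B.
TIERS = [
    ("Entry-Level", 3.0, 7.0),
    ("Mid-Range", 7.0, 13.0),
    ("High-Performance", 13.0, math.inf),
]


def tiers_for(params_billion: float) -> list[str]:
    """Return every tier whose parameter range includes the given model size."""
    return [name for name, low, high in TIERS if low <= params_billion <= high]


if __name__ == "__main__":
    for size in (3, 7, 13, 70):
        print(f"{size}B -> {tiers_for(size)}")
```

Sizes the table does not cover (below 3B) return an empty list rather than a guess. Where two tiers match, the higher tier is the safer choice for sustained or multi-model workloads.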

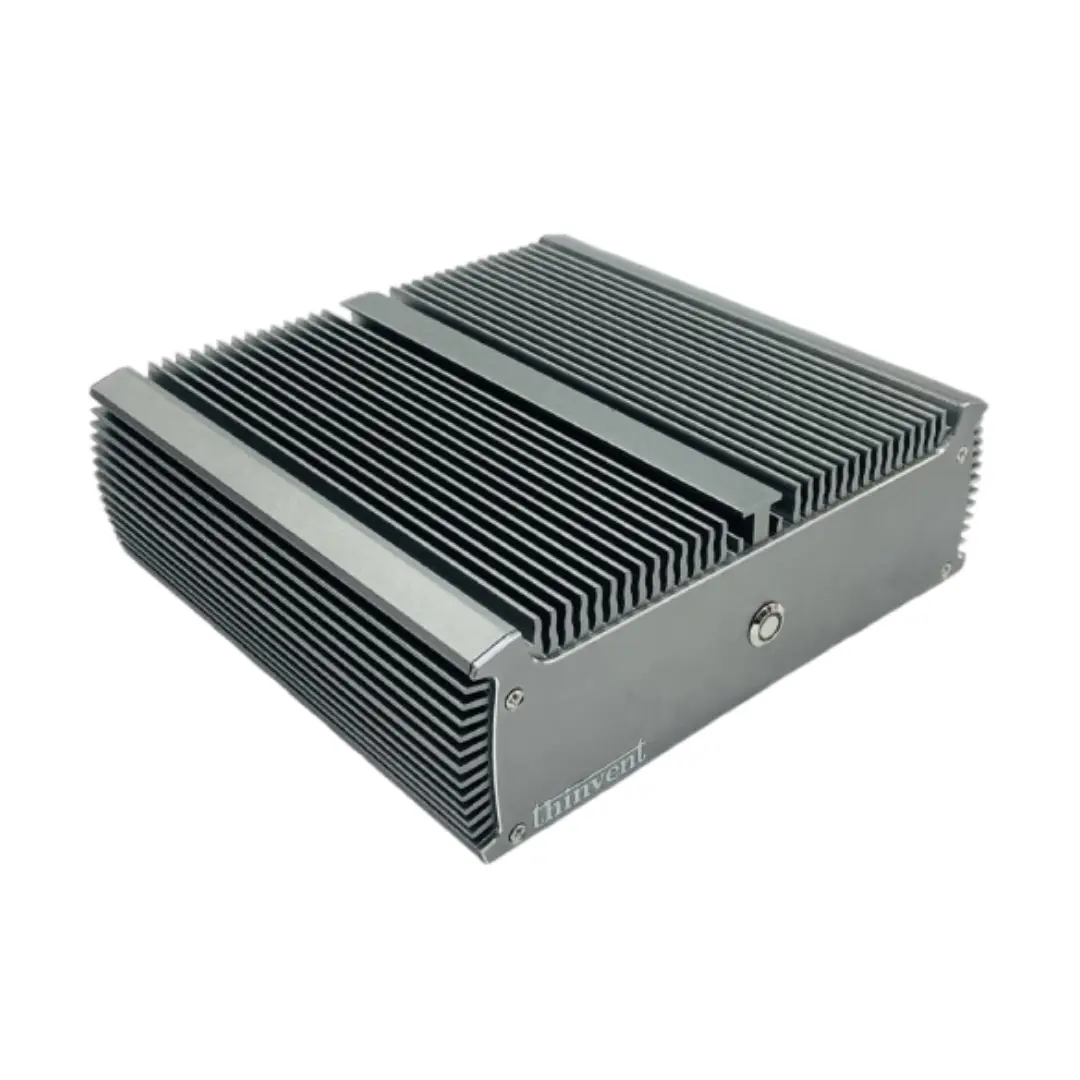

Thinvent Industrial PCs for Local AI

Thinvent's range of industrial computers provides robust, reliable hardware foundations for deploying local LLMs. Our systems are built for 24/7 operation with fanless designs for silent, dust-resistant performance in diverse environments. For CPU-intensive AI workloads, we recommend our systems featuring Intel Core i5, i7, and i9 processors from the 12th, 13th, and 14th generations. These units offer the high core counts, substantial L3 cache, and support for up to 64GB of fast DDR4/DDR5 memory required for smooth local inference. Coupled with high-speed NVMe SSD storage for quick model loading, Thinvent PCs are engineered to bring powerful, private AI capabilities to the edge.