A Local LLM PC is a desktop computer configured to run Large Language Models (LLMs) and other generative AI models on its own hardware, without relying on cloud services. Keeping inference local improves data privacy, avoids network round-trip latency, and makes operating costs predictable. The core requirements for such a system are a capable multi-core processor, enough RAM to hold the model, and fast storage, because AI workloads are both compute-intensive and memory-intensive.

Key Specifications for Local LLM PCs

The performance of a local LLM system hinges on its ability to load large model files into memory and process them efficiently. Key technical specifications include:

- Processor (CPU): A modern, multi-core CPU is essential. While dedicated AI accelerators (NPUs/GPUs) offer the best performance, capable x86 processors from Intel's Core i-series or the newer N-series can run smaller and quantized models effectively. Core count and single-thread performance are both important.

- Main Memory (RAM): This is often the primary bottleneck, because the model weights must be held in RAM during inference. 16GB is a practical minimum for smaller 7B-parameter models, while 32GB or more is recommended for 13B+ parameter models to ensure smooth operation.

- Storage (SSD): A fast NVMe SSD (512GB or larger) is crucial for loading multi-gigabyte model files from disk into RAM quickly, which significantly reduces startup and load times.

- Operating System: Linux distributions such as Ubuntu are often preferred for AI development because of their robust toolchain support and stability. Windows 11 is also viable, especially as support for AI frameworks grows.
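The RAM and storage guidance above can be checked with simple arithmetic. The sketch below is a rough sizing aid, not a benchmark: the 20% runtime overhead, the 4GB reserved for the operating system, and the 2 GB/s SSD read rate are illustrative assumptions, and the function names are hypothetical. Real figures depend on the inference runtime, the context length, and the specific drive.

```python
def estimate_model_ram_gb(params_billion: float, bits_per_weight: float,
                          overhead_fraction: float = 0.2) -> float:
    """Approximate RAM (in GB, 1 GB = 1e9 bytes) needed to run a model.

    overhead_fraction is an assumed allowance for runtime buffers and
    context cache; it is not a measured value.
    """
    weight_bytes = params_billion * 1e9 * bits_per_weight / 8
    return weight_bytes * (1 + overhead_fraction) / 1e9


def fits_in_ram(required_gb: float, installed_gb: float,
                reserved_for_os_gb: float = 4.0) -> bool:
    """True if the model fits after setting aside RAM for the OS (assumed 4GB)."""
    return required_gb <= installed_gb - reserved_for_os_gb


def estimate_load_seconds(file_size_gb: float, read_gb_per_s: float) -> float:
    """Best-case time to read a model file from disk at a sustained read rate."""
    return file_size_gb / read_gb_per_s


if __name__ == "__main__":
    # Compare 4-bit quantized and 16-bit weights on 16GB and 32GB systems.
    for params, bits, installed in [(7, 4, 16), (7, 16, 16), (13, 4, 32), (13, 16, 32)]:
        need = estimate_model_ram_gb(params, bits)
        verdict = "fits" if fits_in_ram(need, installed) else "does not fit"
        print(f"{params}B @ {bits}-bit: ~{need:.1f} GB, {verdict} in {installed}GB")

    # Assumed 2 GB/s sustained read rate; actual NVMe throughput varies by drive.
    print(f"4 GB file load: ~{estimate_load_seconds(4.0, 2.0):.1f} s")
```

Under these assumptions, a 7B model at 4 bits per weight needs about 4.2 GB and fits comfortably in 16GB. The same model at 16 bits needs about 16.8 GB and does not, which is why quantized models are the practical choice on CPU-only systems.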

Use Cases and Applications

Running LLMs locally unlocks several professional and industrial applications:

- Secure AI Development & Testing: Developers can build, fine-tune, and test AI applications in a controlled, offline environment, keeping proprietary code and data secure.

- Private Data Analysis: Organizations in the finance, healthcare, and legal sectors can process sensitive documents through LLMs for summarization, classification, and insight generation without data leaving their premises.

- Edge AI and IoT: Compact, fanless LLM PCs can be deployed in field locations for real-time language processing, interactive kiosks, or intelligent monitoring systems where cloud connectivity is unreliable or undesirable.

- Research and Education: A local LLM PC provides a cost-effective, dedicated platform for academic research into AI model behavior, prompt engineering, and algorithm development.
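As an illustration of the private data analysis case, the sketch below sends a document to a model server running on the same machine, so the text never leaves the PC. It assumes an Ollama server is installed and listening on its default local port, and that a model has already been pulled. The model name `llama3`, the prompt wording, and the helper names are placeholders chosen for this example, not part of any Thinvent configuration.

```python
import json
import urllib.request

# Assumption: a local Ollama server on its default port (not a cloud endpoint).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_summary_request(document_text: str, model: str = "llama3") -> dict:
    """Build the JSON body for a single, non-streaming generation request."""
    prompt = "Summarize the following document in three sentences:\n\n" + document_text
    return {"model": model, "prompt": prompt, "stream": False}


def summarize_locally(document_text: str, model: str = "llama3",
                      url: str = OLLAMA_URL, timeout: float = 120.0) -> str:
    """POST the document to the local server and return the generated text."""
    payload = json.dumps(build_summary_request(document_text, model)).encode("utf-8")
    request = urllib.request.Request(
        url, data=payload, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["response"]


if __name__ == "__main__":
    # Requires the local server to be running; otherwise urlopen raises an error.
    print(summarize_locally("Quarterly revenue rose while operating costs fell."))
```

Because the request targets `localhost`, the same script works on a machine with no internet connection once the model files are on disk.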

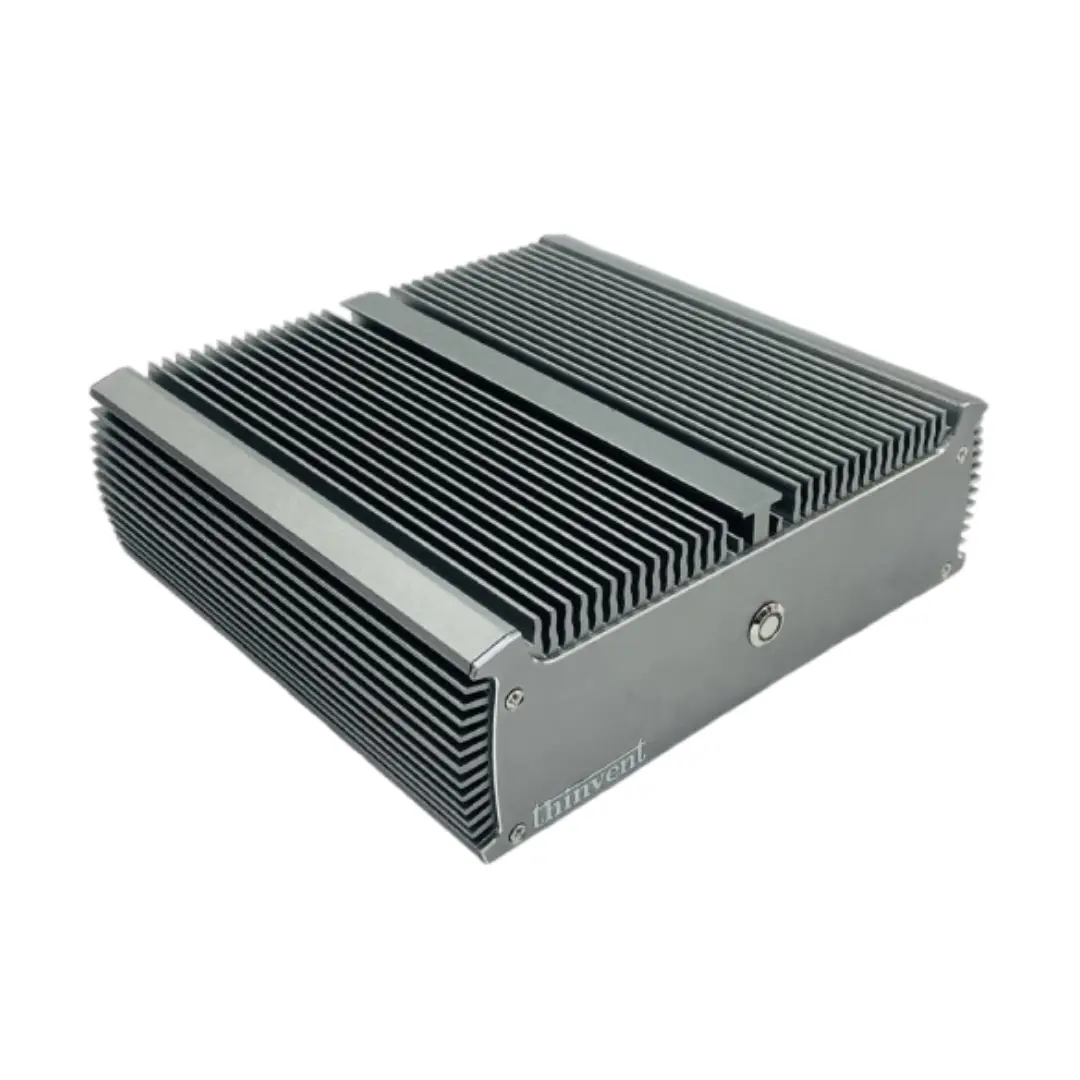

Thinvent PCs for Local LLM Workloads

Thinvent offers a range of industrial-grade computing solutions that provide a reliable, capable foundation for local AI inference. Our systems are built for 24/7 operation in diverse environments.

For Entry-Level & Efficient LLM Tasks: Our fanless Mini PCs equipped with modern Intel processors, 16GB+ of RAM, and fast SSDs offer a power-efficient and silent platform for running smaller, quantized LLMs. Their compact size makes them ideal for integrated edge AI solutions.

For Demanding AI Development: Users who need more computational headroom can choose our high-performance Industrial PCs, which feature the latest Intel Core i5 and i7 processors, support for up to 64GB of RAM, and multiple high-speed storage options. This hardware can handle more complex models and development workflows.

Thinvent computers provide the durable, stable hardware platform needed to explore and deploy local AI, ensuring your intelligent applications run reliably wherever they are needed.