What is the Best Computer to Run an LLM?

The best computer for running a Large Language Model (LLM) is a system with a powerful multi-core processor, substantial RAM, and fast storage. While dedicated GPUs are often recommended for training and high-demand inference, many smaller or quantized LLMs can run effectively on high-performance CPUs, especially for local, private, or edge computing applications. The key is balancing raw computational power with memory capacity and bandwidth: the model's parameters must fit in memory, and they are read from memory repeatedly as each token is generated.
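
To make the memory-bandwidth point concrete, here is a rough Python sketch of a commonly used rule of thumb: when a dense model generates one token at a time, every weight is read from memory once per token, so peak memory bandwidth divided by model size gives an upper bound on tokens per second. The function names, the dual-channel DDR5-4800 configuration, and the 3.5 GB model size are illustrative assumptions, and real throughput will be lower than this ceiling.

```python
def peak_memory_bandwidth_gbs(channels: int, transfer_rate_mts: float,
                              bus_width_bytes: int = 8) -> float:
    """Theoretical peak DRAM bandwidth in GB/s (decimal gigabytes).

    Assumes a 64-bit (8-byte) data bus per channel, which is an
    assumption about the platform, not a measured value.
    """
    return channels * bus_width_bytes * transfer_rate_mts / 1000.0


def decode_tokens_per_second_ceiling(bandwidth_gbs: float,
                                     model_size_gb: float) -> float:
    """Upper bound on single-stream token generation speed.

    Assumes each generated token requires reading all model weights
    from RAM once; caches, compute limits, and overhead are ignored.
    """
    if model_size_gb <= 0:
        raise ValueError("model_size_gb must be positive")
    return bandwidth_gbs / model_size_gb


if __name__ == "__main__":
    # Hypothetical system: dual-channel DDR5-4800.
    bandwidth = peak_memory_bandwidth_gbs(channels=2, transfer_rate_mts=4800)
    # Hypothetical model: about 3.5 GB of weights (roughly 7B at 4 bits).
    ceiling = decode_tokens_per_second_ceiling(bandwidth, model_size_gb=3.5)
    print(f"Peak bandwidth: {bandwidth:.1f} GB/s")
    print(f"Token rate ceiling: {ceiling:.1f} tokens/s")
```

The estimate is most useful for comparing configurations, for example single-channel versus dual-channel memory, rather than for predicting absolute speed.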

Key Specifications for LLM Performance

For optimal LLM operation, focus on these core components:

- Processor (CPU): A modern, multi-core CPU is essential. Higher core and thread counts (e.g., 10 or 12 cores) allow more parallel work within each model layer. Token generation speed also depends on memory bandwidth, so more cores alone do not guarantee proportional gains. Newer processor generations (12th, 13th, and 14th Gen Intel® Core™) offer improvements in instructions per cycle (IPC) and efficiency over earlier generations.

- Main Memory (RAM): This is critical, because the model's weights are loaded into RAM during operation. For running quantized models with 7B to 13B parameters, 16GB is a practical minimum (at 16-bit precision, a 13B model needs about 26GB for its weights alone). 32GB or 64GB is recommended for smoother operation, larger context windows, or running multiple models.

- Storage (SSD): A fast NVMe SSD (512GB or 1TB) reduces model load times and keeps the system responsive when data must be moved between storage and RAM.

- Operating System: Linux distributions like Ubuntu are often preferred for development and deployment due to their stability, efficiency, and robust tooling for AI/ML workloads. Windows 11 Pro is also a viable platform, especially where a desktop interface or Windows-based tooling is needed.
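
The RAM guidance above follows from simple arithmetic: weight memory is roughly the parameter count multiplied by the bytes stored per weight. The Python sketch below illustrates this. The 1.2 overhead factor (for context cache and runtime buffers) and the 4 GB reserved for the operating system are placeholder assumptions, not measured values, and the function names are hypothetical.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in decimal gigabytes."""
    # billions of parameters * bits per weight / 8 bits per byte = GB
    return params_billions * bits_per_weight / 8


def fits_in_ram(params_billions: float, bits_per_weight: float, ram_gb: float,
                overhead_factor: float = 1.2, reserved_gb: float = 4.0) -> bool:
    """Rough check of whether a model fits in a given amount of RAM.

    overhead_factor and reserved_gb are illustrative assumptions; actual
    overhead depends on the runtime and the context length in use.
    """
    needed = weight_memory_gb(params_billions, bits_per_weight) * overhead_factor
    return needed + reserved_gb <= ram_gb


if __name__ == "__main__":
    for params, bits in [(7, 4), (13, 4), (13, 16)]:
        size = weight_memory_gb(params, bits)
        print(f"{params}B at {bits}-bit: ~{size:.1f} GB of weights, "
              f"fits in 16 GB: {fits_in_ram(params, bits, 16)}, "
              f"fits in 64 GB: {fits_in_ram(params, bits, 64)}")
```

Under these assumptions, 4-bit 7B and 13B models fit within 16GB, while a 13B model at 16-bit precision calls for the 64GB configuration.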

Use Cases and Applications

Running LLMs on dedicated computers enables several key applications:

- Private & Secure AI: Host models locally to ensure complete data privacy and security, avoiding cloud API dependencies.

- Edge AI & IoT: Deploy compact, fanless industrial computers in field locations for real-time language processing in kiosks, digital signage, or automation systems.

- Development & Testing: Use a powerful workstation for prototyping, fine-tuning, and testing LLM applications before wider deployment.

- Cost-Effective Inference: For specific, optimized models, a high-spec CPU-based system can provide a reliable and lower total-cost-of-ownership solution compared to continuous cloud GPU usage.
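
The total-cost-of-ownership comparison can be framed as a break-even calculation: how many months of avoided cloud charges it takes to pay for the local hardware. The Python sketch below is only a framing aid. Every figure in the example is a made-up placeholder rather than a real price, and the function name and parameters are hypothetical.

```python
from typing import Optional


def breakeven_months(hardware_cost: float, cloud_rate_per_hour: float,
                     hours_per_month: float,
                     local_power_cost_per_month: float = 0.0) -> Optional[float]:
    """Months until a local system costs less than renting cloud capacity.

    Returns None when the local system never breaks even, that is, when
    the monthly cloud bill does not exceed the local running cost.
    """
    monthly_saving = (cloud_rate_per_hour * hours_per_month
                      - local_power_cost_per_month)
    if monthly_saving <= 0:
        return None
    return hardware_cost / monthly_saving


if __name__ == "__main__":
    # Placeholder inputs for illustration only; substitute real quotes.
    months = breakeven_months(hardware_cost=1200.0, cloud_rate_per_hour=1.0,
                              hours_per_month=200.0,
                              local_power_cost_per_month=10.0)
    print(f"Break-even after about {months:.1f} months")
```

The result is sensitive to utilization: a system that runs only a few hours per month may never break even, which is why the function can return `None`.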

Comparison of Key Components

| Component | Minimum Recommendation | Recommended for Performance |
|---|---|---|
| Processor Cores | 6 Cores (e.g., i5) | 10+ Cores (e.g., i5/i7 12th+ Gen) |
| Main Memory (RAM) | 16 GB DDR4/DDR5 | 32 GB - 64 GB |
| Storage (SSD) | 256 GB NVMe | 512 GB - 1 TB NVMe |
| OS for Development | Windows 11 Pro | Ubuntu Linux 24.04 LTS |
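
As a quick way to apply the table, the Python sketch below classifies a machine against the two hardware tiers. The thresholds are taken from the table (using the lower bound of each recommended range), while the `Specs` class and `classify` function are hypothetical helpers introduced for illustration. The operating system row is left out because it is a preference rather than a numeric threshold.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Specs:
    cores: int      # physical CPU cores
    ram_gb: int     # installed RAM in GB
    nvme_gb: int    # NVMe SSD capacity in GB


# Thresholds copied from the comparison table.
MINIMUM = Specs(cores=6, ram_gb=16, nvme_gb=256)
RECOMMENDED = Specs(cores=10, ram_gb=32, nvme_gb=512)


def meets(machine: Specs, target: Specs) -> bool:
    """True when the machine matches or exceeds the target on every component."""
    return (machine.cores >= target.cores
            and machine.ram_gb >= target.ram_gb
            and machine.nvme_gb >= target.nvme_gb)


def classify(machine: Specs) -> str:
    """Return the highest tier that the machine fully satisfies."""
    if meets(machine, RECOMMENDED):
        return "recommended"
    if meets(machine, MINIMUM):
        return "minimum"
    return "below minimum"


if __name__ == "__main__":
    # Hypothetical configuration used only as an example.
    print(classify(Specs(cores=12, ram_gb=64, nvme_gb=1000)))
```

A machine is placed in a tier only if every component qualifies, so a 12-core system with 16 GB of RAM is still classified as "minimum".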

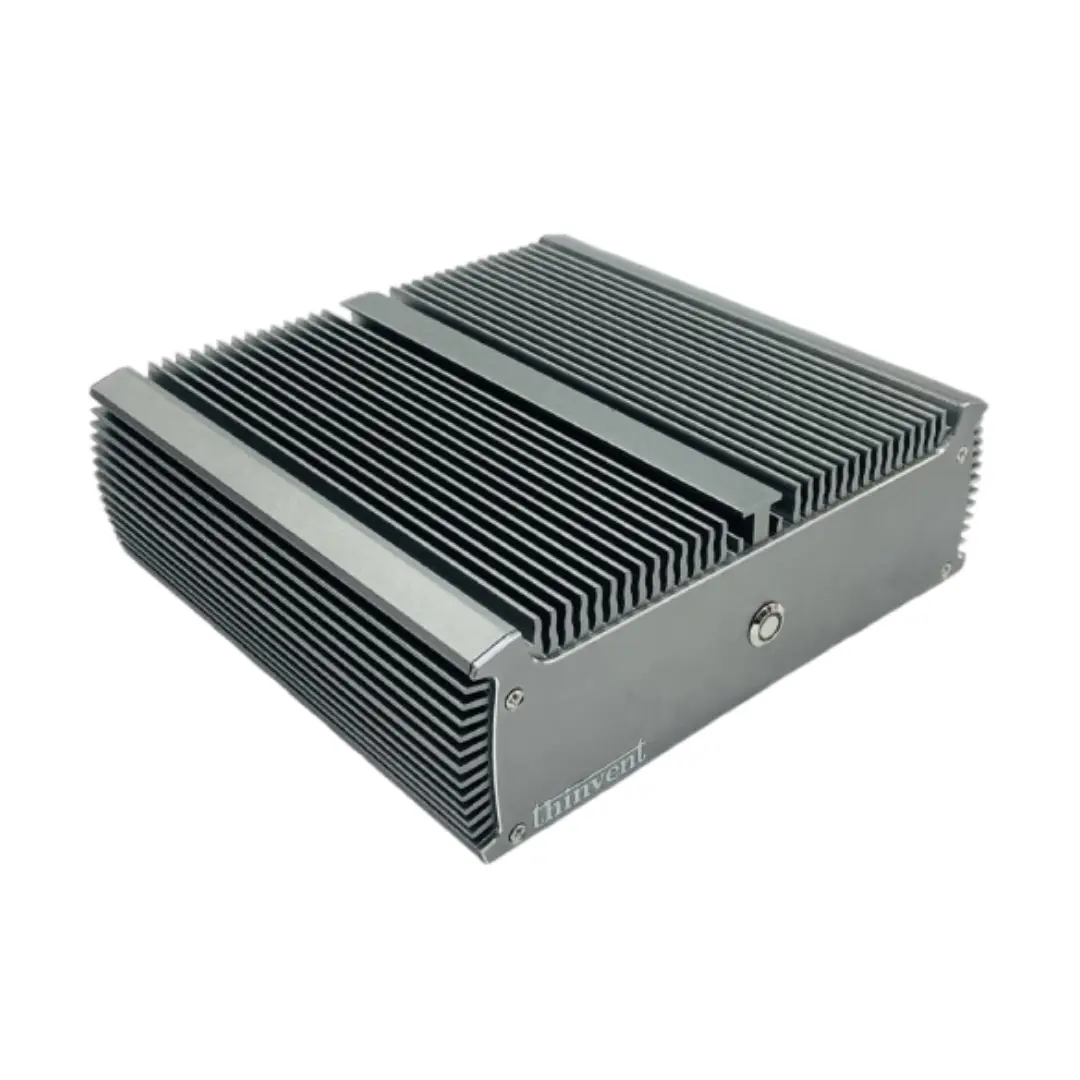

Thinvent Computers for LLM Workloads

Thinvent offers a range of industrial-grade computing solutions well-suited for local LLM deployment. Our systems prioritize reliability, sustained performance, and flexible configurations. For demanding LLM inference tasks, consider our high-core-count Mini PCs and Industrial PCs featuring Intel® Core™ i5 and i7 processors from the 12th, 13th, and 14th generations. These can be configured with up to 64GB of RAM and high-speed NVMe storage, providing the necessary computational foundation. Their fanless, rugged designs also make them ideal for deploying AI at the edge in challenging environments where stability is paramount.