Running Large Language Models (LLMs) locally places specific demands on hardware if performance is to stay smooth and responsive. Unlike cloud-based inference, local deployment puts the entire computational load on your PC, so it needs a capable processor, ample memory, and fast storage. The ideal system balances a high core count for parallel processing, fast clock speeds for single-threaded work, and enough RAM to hold the model parameters entirely in memory, which avoids the severe slowdowns caused by swapping to disk.

Key Specifications for LLM PCs

The primary hardware considerations are the CPU, RAM, and storage.

- Processor (CPU): Intel Core i5 or i7 processors from the 12th generation onward are recommended. Look for Performance cores (P-cores) and a high maximum turbo frequency (ideally 4.0 GHz or more). Core count matters because CPU inference runtimes split the underlying matrix math across threads; chips with 10 or more cores, such as the i5-1240P (12 cores: 4 P-cores and 8 E-cores) or the i5-1335U (10 cores: 2 P-cores and 8 E-cores), offer good parallelism for model inference and small-scale fine-tuning.
- Main Memory (RAM): This is often the limiting factor. For 7B-13B parameter models, 16GB of RAM is a practical minimum, assuming quantized (for example, 4-bit) weights; unquantized 16-bit weights take about 2 bytes per parameter, or roughly 14GB for a 7B model alone. For 30B+ models, or to run a model alongside other applications, 32GB to 64GB of DDR4/DDR5 RAM is essential.
- Storage: A fast NVMe SSD (512GB or 1TB) drastically reduces model load times and improves overall system responsiveness compared to traditional hard drives or eMMC storage. Model files range from a few GB to tens of GB, so drive read speed directly determines how long a model takes to load.
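
The RAM and storage guidance comes down to simple arithmetic: weight size is roughly parameter count times bytes per parameter, and load time is roughly file size divided by drive read speed. The Python sketch below is a back-of-envelope estimate under stated assumptions, not a benchmark. The bytes-per-parameter values ignore quantization metadata, the 4GB reserve for the OS, other applications and the KV cache is an arbitrary placeholder, and the drive read speeds are rough illustrative figures. All function and table names are hypothetical.

```python
# Back-of-envelope sizing for running an LLM locally.
# All constants are illustrative assumptions, not measured values.

# Approximate bytes per parameter; ignores quantization scales/metadata.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "q4": 0.5}

# Assumed sequential read speeds in GB/s; real drives vary widely.
READ_GB_PER_S = {"nvme": 3.0, "sata_ssd": 0.5, "emmc": 0.25, "hdd": 0.15}


def weights_gb(params_billion: float, precision: str) -> float:
    """Size of the model weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * BYTES_PER_PARAM[precision]


def fits_in_ram(params_billion: float, precision: str, ram_gb: float,
                reserve_gb: float = 4.0) -> bool:
    """True if the weights fit while leaving reserve_gb (a placeholder
    assumption) for the OS, other apps and the KV cache."""
    return weights_gb(params_billion, precision) + reserve_gb <= ram_gb


def load_seconds(size_gb: float, storage: str) -> float:
    """Rough time to read a model file of size_gb from the given storage type."""
    return size_gb / READ_GB_PER_S[storage]


if __name__ == "__main__":
    for params in (7, 13, 30):
        for precision in ("fp16", "q4"):
            size = weights_gb(params, precision)
            print(
                f"{params}B {precision}: {size:.1f} GB weights, "
                f"fits in 16GB: {fits_in_ram(params, precision, 16)}, "
                f"fits in 32GB: {fits_in_ram(params, precision, 32)}, "
                f"load ~{load_seconds(size, 'nvme'):.0f} s (NVMe) "
                f"vs ~{load_seconds(size, 'hdd'):.0f} s (HDD)"
            )
```

Under these assumptions, a 13B model fits in 16GB only when quantized (about 6.5GB at 4-bit versus 26GB at 16-bit), and a 30B model needs the 32GB tier even at 4-bit, which matches the RAM tiers listed above.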

Use Cases and Applications

Local LLM deployment is ideal for developers, researchers, and businesses requiring data privacy, offline access, or predictable latency. Common applications include:

- AI-Powered Development: Running code-generation models locally and using them from within an IDE.
- Private Data Analysis: Processing sensitive documents or internal communications without sending data to external servers.
- Content Creation & Research: Using LLMs for drafting, summarization, and research with offline availability and no per-query usage caps.
- Embedded AI Solutions: Integrating LLM capabilities into kiosks, digital signage, or specialized industrial PCs for on-device intelligence.

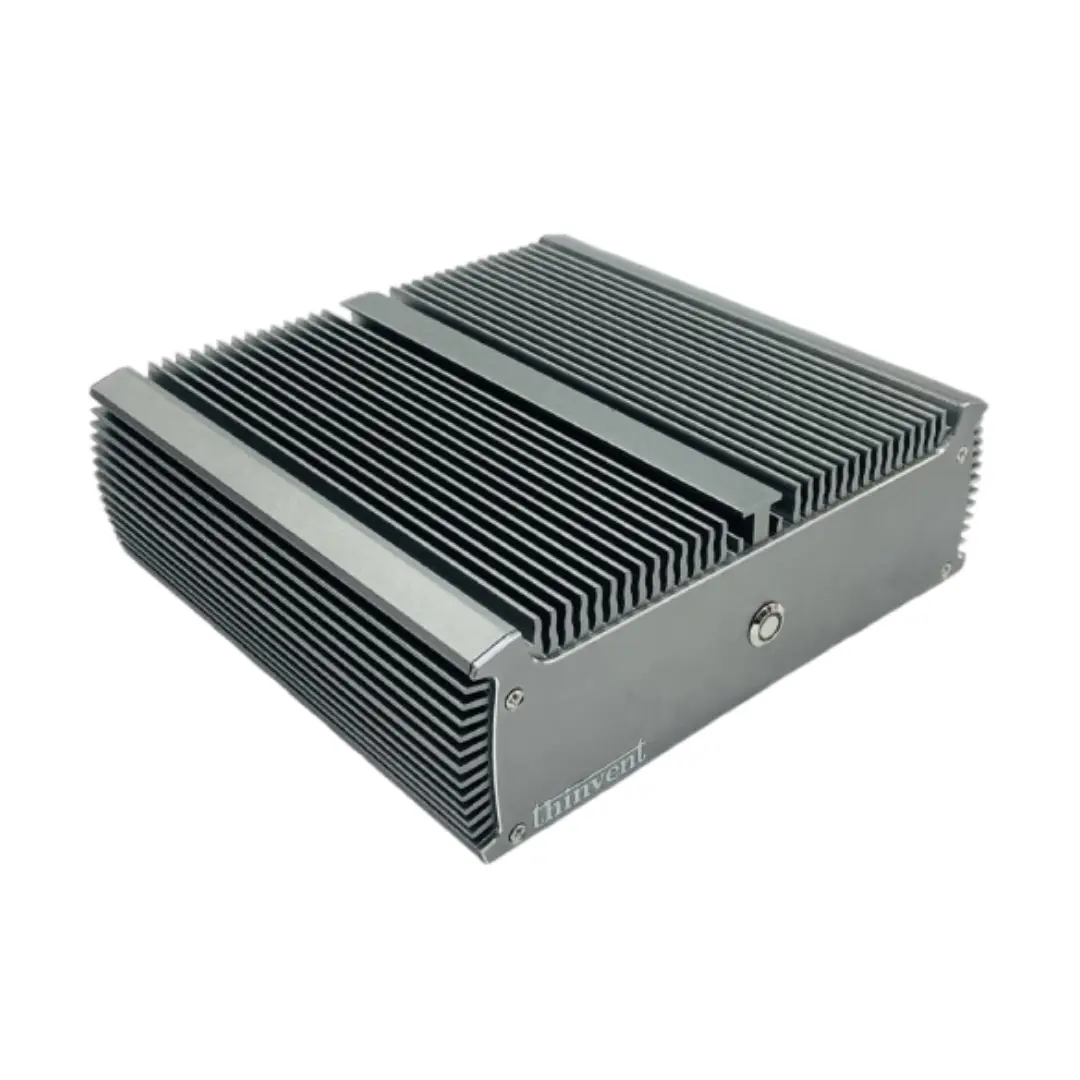

Thinvent PCs for Local LLM Deployment

Thinvent's range of industrial and mini PCs is engineered for demanding computational workloads, making them well-suited for local AI and LLM tasks. Key product lines include:

- Industrial PC (IPC) Series: Featuring processors such as the Intel Core i5-1240P (12 cores) with support for up to 64GB RAM, these rugged systems offer sustained performance and reliability for continuous AI inference.
- Aero Mini PC Series: Equipped with recent Intel Core processors such as the Core 5 120U (10 cores), these compact units deliver strong performance in a small footprint, suiting development and testing.
- All-in-One PCs: Models such as the Uno 23.8", with an Intel Core i5-1335U and configurable with up to 64GB RAM, offer an integrated, space-saving solution for AI workstations.

These systems combine the computational power, memory scalability, and robust cooling (often fanless or with advanced thermal designs) needed to run LLMs locally, supporting stable performance for both prototyping and production AI applications.