What Makes a PC Ideal for Running Local LLMs?

Running a Large Language Model (LLM) locally requires a balanced system with a focus on processing power, memory capacity, and storage speed. Unlike cloud-based inference, local deployment demands hardware capable of handling intensive, sustained computational loads for tasks like text generation, code completion, or data analysis. The key is to avoid bottlenecks that slow down model loading and response times.

Key Specifications for Local LLM Performance

For effective local LLM operation, prioritize these components:

-

Processor (CPU): A modern multi-core processor is essential. While dedicated AI accelerators (NPUs/GPUs) offer the best performance, a powerful CPU with high core/thread counts (e.g., Intel Core i5/i7) can efficiently handle smaller models (7B-13B parameters) and quantized versions.

-

System Memory (RAM): This is often the primary constraint. The model weights must be loaded into RAM. A general guideline is 8-16GB for smaller 7B models, 16-32GB for 13B models, and 32GB+ for larger 70B models (especially when quantized). Faster DDR4/DDR5 RAM improves data throughput.

-

Storage (SSD): A fast NVMe SSD drastically reduces model load times from several minutes to seconds. Aim for at least 512GB of storage to accommodate the operating system, the LLM software (like Ollama or LM Studio), and multiple model files, which can be 4-40GB each.

-

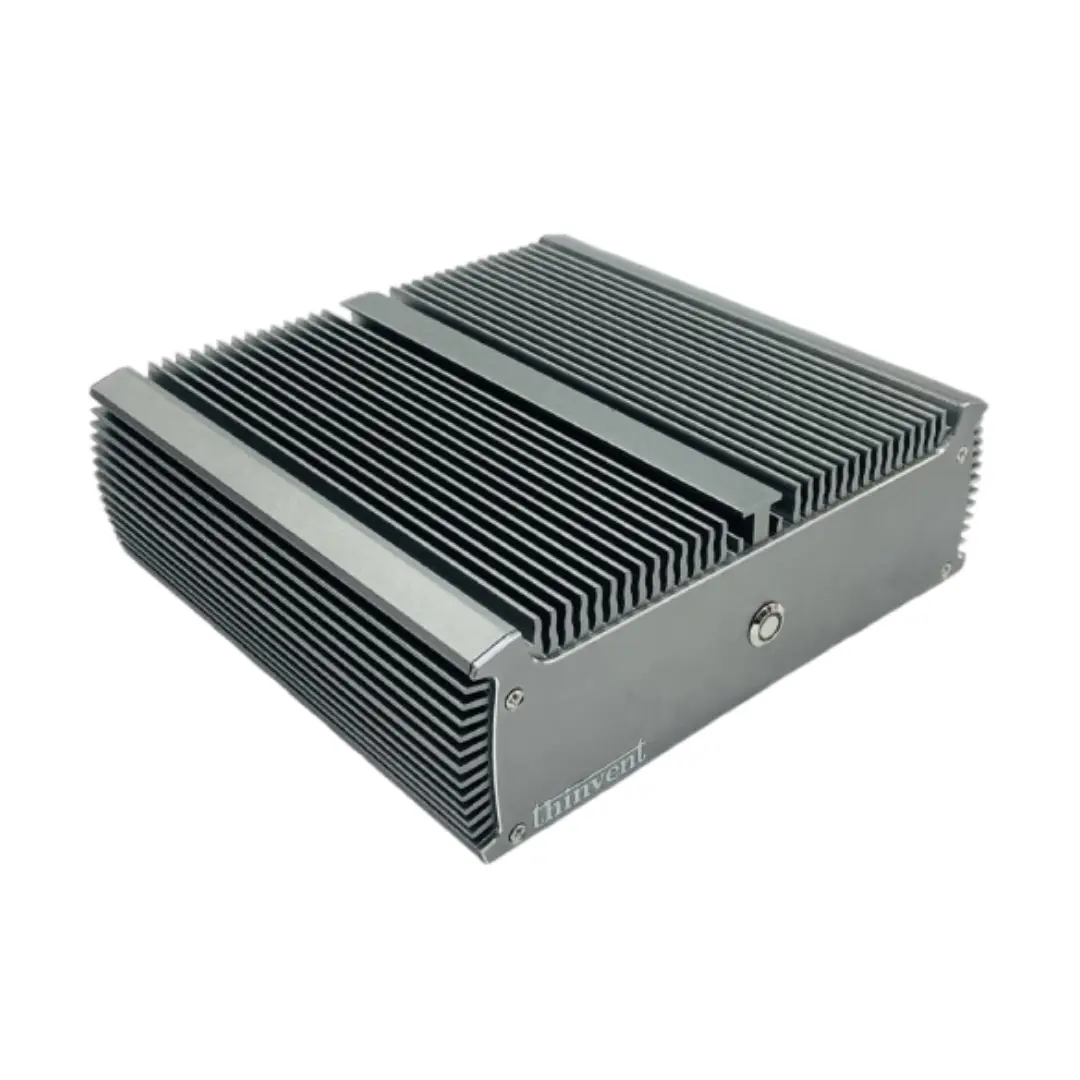

Form Factor & Cooling: Industrial and mini PCs with robust, fanless cooling are ideal for 24/7 operation, ensuring consistent performance without thermal throttling in embedded environments.
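The RAM guideline above follows from simple arithmetic: the weights occupy roughly (parameter count × bits per weight ÷ 8) bytes, plus some working memory for the context cache and the runtime. The sketch below illustrates that estimate. The function names and the 20% overhead factor are assumptions chosen for illustration, not measured values; real usage varies with context length and the inference software.

```python
def estimate_weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 10^9 bytes)."""
    # 1 billion parameters at 8 bits each is 1 GB, so scale by bits / 8.
    return params_billion * bits_per_weight / 8


def estimate_ram_gb(params_billion: float, bits_per_weight: float,
                    overhead: float = 0.2) -> float:
    """Weights plus an assumed fractional overhead for cache and runtime.

    The default overhead of 0.2 (20%) is an illustrative assumption.
    """
    return estimate_weights_gb(params_billion, bits_per_weight) * (1 + overhead)


if __name__ == "__main__":
    # Compare 16-bit weights with 4-bit quantization for the model sizes
    # discussed in this article.
    for size in (7, 13, 70):
        for bits in (16, 4):
            print(f"{size}B @ {bits}-bit: "
                  f"weights ~{estimate_weights_gb(size, bits):.1f} GB, "
                  f"RAM ~{estimate_ram_gb(size, bits):.1f} GB")
```

Remember to leave headroom for the operating system and other applications on top of the estimate, which is why the practical guidelines are higher than the raw weight sizes.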

Use Cases and Applications

Local LLMs are deployed for privacy-sensitive, low-latency, or offline applications:

- Secure Document Analysis: Processing confidential internal documents without external API calls.

- Edge AI & IoT: Integrating conversational AI into kiosks, digital signage, or robotics.

- Developer Workstations: For offline coding assistance, code explanation, and testing.

- Research & Prototyping: Experimenting with model fine-tuning and custom pipelines in controlled settings.
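As an illustration of the document-analysis case, the sketch below sends text to a model served by Ollama on the same machine, so nothing leaves the PC. It assumes Ollama is running on its default local port (11434) and that a model has already been pulled. The model name `llama3`, the prompt wording, and the helper names are placeholders chosen for this example.

```python
import json
import urllib.request

# Ollama's default local endpoint for single-shot text generation.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a POST request for the local Ollama server (no network call yet)."""
    # "stream": False asks for one JSON object instead of a token stream.
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def summarize(document_text: str, model: str = "llama3") -> str:
    """Ask the local model for a short summary of a document."""
    prompt = ("Summarize the following document in three bullet points:\n\n"
              + document_text)
    # Generous timeout: CPU-only inference can take a while on long inputs.
    with urllib.request.urlopen(build_request(prompt, model), timeout=300) as resp:
        return json.loads(resp.read())["response"]


# Example usage (requires a running Ollama server with the model pulled):
# print(summarize(open("internal_report.txt", encoding="utf-8").read()))
```

Because the request targets `localhost`, the same code works on a machine with no internet connection once the model file is on disk.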

Recommended System Tiers for Local LLMs

| Use Case / Model Size | Recommended CPU Series | Minimum RAM | Recommended SSD | Ideal Form Factor |
|---|---|---|---|---|
| Lightweight (e.g., 7B Params) | Intel N-Series, Core i3 | 16 GB | 256 GB NVMe | Mini PC, Thin Client |
| Balanced (e.g., 13B Params) | Intel Core i5, i7 | 32 GB | 512 GB NVMe | Industrial PC, Mini PC |
| Advanced (e.g., 70B Params - Quantized) | Intel Core i7, i9 | 64 GB+ | 1 TB+ NVMe | High-Performance Industrial PC |
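For scripted sizing, the tiers above can be encoded as a small lookup. This is a minimal sketch: the table only gives example model sizes, so the cut-off points used here (up to 7B, up to 13B, up to 70B) are assumptions, as are the function and field names.

```python
# Each entry: (assumed upper bound in billions of parameters, tier details).
# The tier details are copied from the table; the bounds are assumptions.
TIERS = [
    (7, {"name": "Lightweight", "cpu": "Intel N-Series, Core i3",
         "min_ram_gb": 16, "ssd": "256 GB NVMe"}),
    (13, {"name": "Balanced", "cpu": "Intel Core i5, i7",
          "min_ram_gb": 32, "ssd": "512 GB NVMe"}),
    (70, {"name": "Advanced", "cpu": "Intel Core i7, i9",
          "min_ram_gb": 64, "ssd": "1 TB+ NVMe"}),
]


def recommend_tier(params_billion: float) -> dict:
    """Return the first tier whose assumed upper bound covers the model size."""
    if params_billion <= 0:
        raise ValueError("params_billion must be positive")
    for upper_bound, tier in TIERS:
        if params_billion <= upper_bound:
            return tier
    # The table does not cover models beyond the quantized 70B class.
    raise ValueError(f"No tier listed for {params_billion}B parameters")


if __name__ == "__main__":
    for size in (3, 7, 13, 34, 70):
        tier = recommend_tier(size)
        print(f"{size}B -> {tier['name']}: {tier['cpu']}, "
              f"{tier['min_ram_gb']} GB RAM, {tier['ssd']}")
```

Models that fall between the listed examples are rounded up to the next tier, which errs on the side of more RAM rather than less.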

Thinvent PCs for Local LLM Workloads

Thinvent's range of industrial computing solutions provides the reliable, high-performance foundation required for local AI inference. Our systems are engineered for sustained operation in diverse environments. For LLM tasks, we recommend exploring our Aero Mini PC series with configurable high-capacity RAM and fast NVMe storage, or our more powerful industrial PC lines featuring latest-generation Intel Core processors. These fanless, rugged computers ensure your AI applications run consistently without interruption, making them perfect for deploying private, on-premise language models.