A local AI computer is a compact, powerful computing device designed to run artificial intelligence and machine learning models directly on-site, without relying on cloud connectivity. This "edge AI" approach offers significant advantages in latency, data privacy, bandwidth efficiency, and operational reliability. These systems are built around hardware such as multi-core CPUs, ample RAM, and fast storage to handle the computational demands of model inference, data preprocessing, and real-time decision-making.

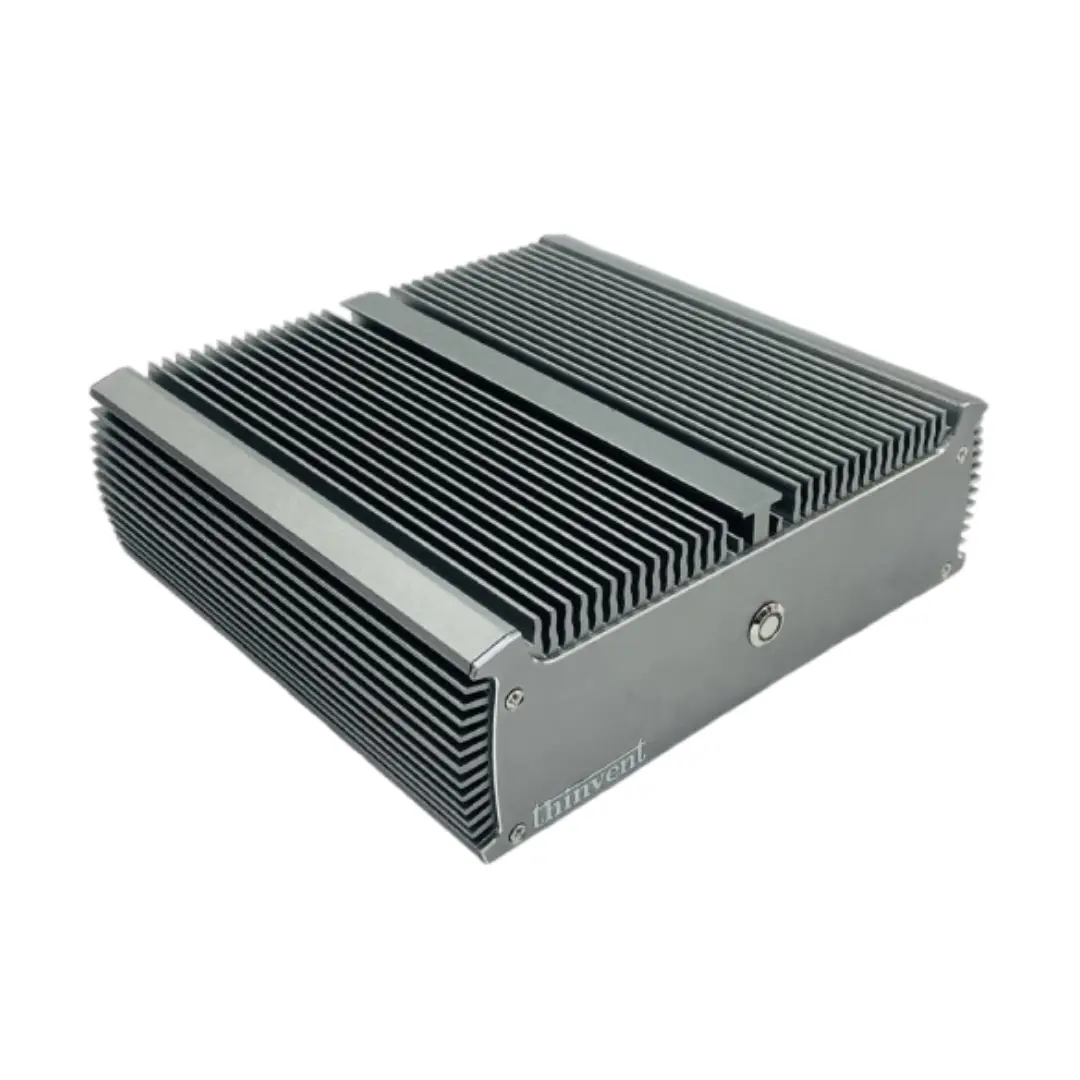

Key specifications for an effective local AI computer center on processing power and memory. A modern multi-core processor (Intel Core i3/i5 or equivalent) is essential: multiple cores allow tasks to run in parallel, while high clock speeds keep individual tasks responsive. Sufficient RAM (typically 8GB or more, with 16GB+ being ideal for larger models) ensures smooth operation without bottlenecks. Fast SSD storage (256GB+) lets models and datasets load quickly. Many edge AI applications also benefit from fanless, rugged designs for deployment in industrial environments where dust, vibration, or wide temperature ranges are factors.
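To see why larger models push RAM requirements toward 16GB and beyond, a rough rule of thumb is that a model's weights occupy about (number of parameters) × (bytes per parameter). The sketch below applies that rule. The 1.2× overhead factor standing in for activations and runtime buffers, the 2GB reserved for the operating system, and the 7-billion-parameter example are all illustrative assumptions, not measured figures; real footprints depend on the model and inference runtime.

```python
# Rough RAM sizing: weights take roughly params x bytes-per-param.
# ASSUMPTION: activations and runtime overhead are approximated by a flat
# multiplier, and a fixed amount of RAM is reserved for the OS/other services.
# Both defaults below are illustrative, not measured.

BYTES_PER_PARAM = {
    "fp32": 4.0,
    "fp16": 2.0,
    "int8": 1.0,
    "int4": 0.5,
}


def estimate_model_ram_gb(num_params: float, precision: str, overhead: float = 1.2) -> float:
    """Approximate RAM footprint in GB (1 GB = 1e9 bytes) for a model at a given precision."""
    if precision not in BYTES_PER_PARAM:
        raise ValueError(f"unknown precision: {precision}")
    weight_bytes = num_params * BYTES_PER_PARAM[precision]
    return weight_bytes * overhead / 1e9


def fits_in_ram(num_params: float, precision: str, ram_gb: float,
                reserved_gb: float = 2.0, overhead: float = 1.2) -> bool:
    """True if the estimated footprint fits in the RAM left after the reserved amount."""
    return estimate_model_ram_gb(num_params, precision, overhead) <= ram_gb - reserved_gb


if __name__ == "__main__":
    params = 7e9  # hypothetical 7-billion-parameter model
    for precision in ("fp16", "int8", "int4"):
        need = estimate_model_ram_gb(params, precision)
        print(f"{precision}: ~{need:.1f} GB | fits 8GB: {fits_in_ram(params, precision, 8)}"
              f" | fits 16GB: {fits_in_ram(params, precision, 16)}")
```

Under these assumptions, the hypothetical model needs roughly 16.8GB at fp16, 8.4GB at int8, and 4.2GB at int4. This is why quantized models are common on 8GB machines and why 16GB gives headroom for larger ones.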

Primary Use Cases and Applications:

- Industrial Automation: Real-time visual inspection, predictive maintenance, and robotic control on the factory floor.
- Smart Retail & Surveillance: On-device people counting, object recognition, and anomaly detection, ensuring privacy and continuous operation.
- Healthcare & Life Sciences: Localized medical imaging analysis and diagnostic support at the point of care.
- IoT Gateways: Aggregating and processing data from multiple sensors, running inference locally, and sending only relevant insights to the cloud.
- Digital Signage & Kiosks: Dynamic, interactive content that adapts based on computer vision inputs.
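The IoT gateway pattern above (infer locally, forward only what matters) can be sketched as a simple filter. In this minimal example, `Reading`, `Insight`, `filter_for_upload`, and `toy_anomaly_score` are hypothetical names. The scoring function is a toy stand-in for a real model, and the actual upload step is deliberately left out.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class Reading:
    sensor_id: str
    value: float


@dataclass
class Insight:
    sensor_id: str
    value: float
    score: float


def filter_for_upload(readings: Iterable[Reading],
                      score_fn: Callable[[Reading], float],
                      threshold: float) -> List[Insight]:
    """Score every reading on the device and keep only those at or above the threshold."""
    insights: List[Insight] = []
    for r in readings:
        score = score_fn(r)  # local inference: no network round trip
        if score >= threshold:
            insights.append(Insight(r.sensor_id, r.value, score))
    return insights


def toy_anomaly_score(r: Reading, expected: float = 50.0, tolerance: float = 10.0) -> float:
    """Hypothetical stand-in for a model: distance from an expected value, scaled by a tolerance."""
    return abs(r.value - expected) / tolerance


if __name__ == "__main__":
    readings = [
        Reading("temp-1", 52.0),
        Reading("temp-2", 75.0),
        Reading("temp-3", 49.0),
        Reading("temp-4", 20.0),
    ]
    to_send = filter_for_upload(readings, toy_anomaly_score, threshold=1.0)
    # Uploading to the cloud is out of scope here; we only report what would be sent.
    print(f"Would upload {len(to_send)} of {len(readings)} readings:",
          [i.sensor_id for i in to_send])
```

In this toy run, only the two out-of-range readings are forwarded. This illustrates how local inference trims the bandwidth a gateway consumes.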

Hardware Considerations for Local AI:

| Component | Recommendation | Rationale |
|---|---|---|
| Processor | Intel Core i3/i5 (12th Gen or newer), Intel N-series (N100/N150) | Balances performance per watt; newer generations offer better support for AI-oriented instruction sets. |
| Cores/Threads | 6 cores / 12 threads or more | Enables parallel processing of inference tasks and multiple data streams; lower-core-count parts such as the 4-core N100/N150 suit lighter workloads. |
| Memory (RAM) | 16GB DDR4/DDR5 (minimum 8GB) | Essential for loading and running medium to large AI models efficiently. |
| Storage | 256GB+ NVMe SSD | Fast read/write speeds for model loading and data logging. |
| Form Factor | Industrial PC, Fanless Mini PC | Provides durability and reliability for 24/7 operation in varied environments. |

Thinvent's Local AI Computing Solutions

Thinvent offers a robust portfolio of computing hardware engineered for local AI workloads at the edge. Our range includes fanless Industrial PCs built for harsh environments, compact Mini PCs for space-constrained deployments, and versatile All-in-One systems with integrated displays. Key models feature the latest Intel Core and N-series processors, configurable with up to 64GB of RAM and high-speed NVMe storage, providing the necessary foundation for demanding inference tasks. Whether your application involves computer vision, sensor analytics, or autonomous operations, Thinvent's reliable, scalable solutions are designed to bring intelligent processing directly to your data source.