A GPU server is a specialized computing system designed to apply the parallel processing power of multiple Graphics Processing Units (GPUs) to tasks beyond traditional graphics rendering. Unlike standard servers that rely primarily on CPUs, GPU servers are engineered to run large numbers of mathematical operations in parallel across massive datasets. This makes them indispensable for high-performance computing (HPC) workloads where raw computational throughput is critical. These systems typically feature high-capacity power supplies, advanced cooling, and high-bandwidth interconnects to support multiple high-end GPUs.

Key specifications for a capable GPU server include powerful multi-core CPUs (to manage the GPUs and feed them data), substantial RAM (often 64GB or more), high-speed NVMe storage, and, most importantly, multiple PCIe slots to accommodate several discrete GPUs. The choice of GPU (such as NVIDIA's A100, H100, or RTX series, or AMD's Instinct series) depends on the specific application. It balances factors like Tensor Cores for AI, VRAM capacity for large models, and double-precision performance for scientific simulations. Support for technologies like NVIDIA NVLink for direct GPU-to-GPU communication is also a crucial consideration when scaling performance across several GPUs.
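
To make the VRAM consideration concrete, the following is a rough sizing sketch rather than vendor guidance. It estimates the memory needed to hold a model's weights and how many GPUs that implies. The function names, the bytes-per-parameter table, and the 1.2 overhead factor are all assumptions introduced for illustration. Training typically needs far more memory than this weights-only estimate suggests.

```python
import math

# Bytes used to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weights_gib(num_params: int, dtype: str) -> float:
    """Memory needed for the model weights alone, in GiB."""
    return num_params * BYTES_PER_PARAM[dtype] / 2**30


def gpus_needed(num_params: int, dtype: str, vram_gib: float,
                overhead: float = 1.2) -> int:
    """Minimum GPU count whose combined VRAM holds the weights.

    `overhead` is a hypothetical safety factor for activations and
    runtime buffers; real overhead depends on the workload.
    """
    required = weights_gib(num_params, dtype) * overhead
    return math.ceil(required / vram_gib)


if __name__ == "__main__":
    # Illustrative case: a 70-billion-parameter model in fp16
    # on GPUs with 80 GiB of VRAM each (assumed figures).
    size = weights_gib(70_000_000_000, "fp16")
    count = gpus_needed(70_000_000_000, "fp16", vram_gib=80)
    print(f"weights: {size:.1f} GiB, GPUs needed: {count}")
```

An estimate like this is only a starting point for choosing between a few high-VRAM GPUs and a larger number of smaller ones. Interconnect bandwidth between the GPUs matters as soon as a model is split across them.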

The primary use cases for GPU servers span several demanding fields. In artificial intelligence and machine learning, they accelerate the training of deep neural networks. In scientific research and simulation, they enable complex modeling in fields like computational fluid dynamics, genomics, and climate forecasting. In media and entertainment, they power real-time rendering, video encoding, and visual effects. They are also used in financial modeling for rapid risk analysis and algorithmic trading, and in healthcare for medical imaging and drug discovery.

| Consideration | CPU-Centric Server | GPU Server (HPC) |
|---|---|---|
| Core Architecture | Fewer, complex cores optimized for serial tasks. | Thousands of smaller, efficient cores for parallel processing. |
| Ideal Workload | General-purpose computing, databases, web serving. | Parallelizable tasks: AI/ML training, scientific computing, rendering. |
| Performance Metric | High single-threaded performance (IPC). | High floating-point operations per second (FLOPS). |
| Power & Cooling | Standard requirements. | High power draw and requires advanced thermal management. |
| Primary Cost Driver | CPU, memory, and storage. | High-end GPUs and supporting infrastructure. |
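
The FLOPS row in the comparison above can be illustrated with a back-of-the-envelope calculation: theoretical peak throughput is roughly cores × clock × floating-point operations per core per cycle. The sketch below assumes a fused multiply-add counts as 2 operations per cycle. The core counts and clocks are illustrative inputs: the GPU figures approximate a data-center part of the A100 class, and the CPU figures are hypothetical. Sustained performance on real workloads is usually well below these peaks.

```python
def peak_tflops(cores: int, clock_ghz: float, flops_per_cycle: int = 2) -> float:
    """Theoretical peak throughput in TFLOPS.

    cores * clock_ghz gives billions of core-cycles per second;
    multiplying by FLOPs per cycle gives GFLOPS, and dividing by
    1000 converts to TFLOPS.
    """
    return cores * clock_ghz * flops_per_cycle / 1000.0


if __name__ == "__main__":
    # Assumed GPU: 6912 FP32 cores at 1.41 GHz, one FMA (2 FLOPs) per cycle.
    gpu = peak_tflops(cores=6912, clock_ghz=1.41)

    # Hypothetical CPU: 32 cores at 3.0 GHz, with wide vector units
    # assumed to deliver 32 FP32 FLOPs per core per cycle.
    cpu = peak_tflops(cores=32, clock_ghz=3.0, flops_per_cycle=32)

    print(f"GPU peak: {gpu:.2f} TFLOPS")
    print(f"CPU peak: {cpu:.2f} TFLOPS")
    print(f"ratio:    {gpu / cpu:.1f}x")
```

The point of the comparison is the shape of the arithmetic, not the exact numbers. The GPU reaches its peak through a very large number of simple cores, while the CPU relies on fewer cores that each do more work per cycle.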

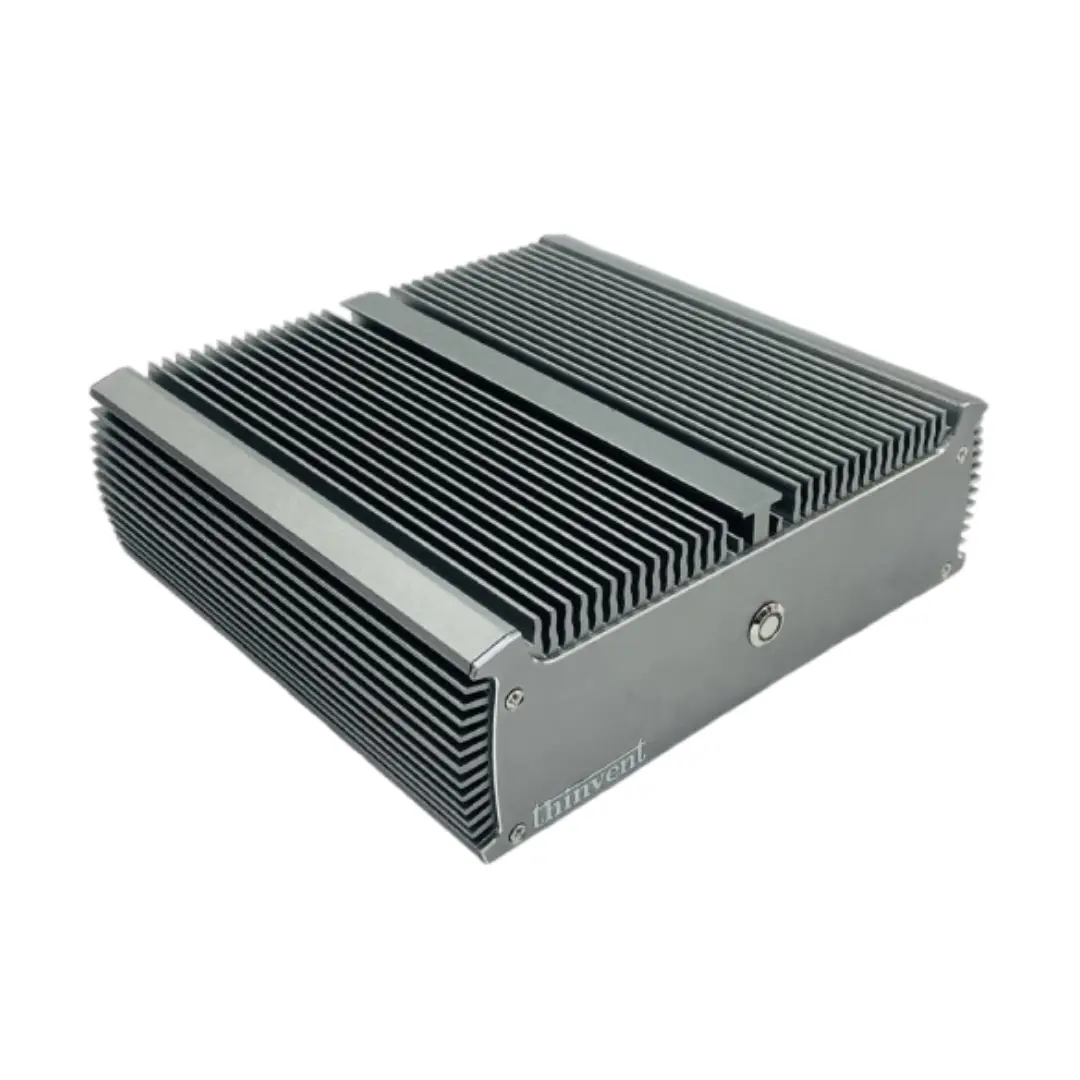

Thinvent's High-Performance Computing Solutions

While Thinvent specializes in robust, fanless industrial computers and compact mini PCs for edge computing and embedded applications, our high-end Industrial PC (IPC) lines form a solid foundation for building dedicated GPU server solutions. For customers requiring significant computational power, our chassis with support for full-size expansion cards can be configured with powerful Intel Core i5 and i7 processors, ample DDR4/DDR5 RAM (configurable up to 64GB), and high-speed NVMe storage. These systems provide the reliable, stable platform necessary to integrate professional-grade GPUs for specialized HPC, AI inference at the edge, or intensive design workstations. Contact our solutions team to discuss custom configurations that meet your specific high-performance computing needs.