Running Large Language Models (LLMs) locally requires a careful balance of computational power, memory, and storage. Unlike cloud-based inference, local deployment demands hardware capable of handling intensive, parallel processing tasks and managing large model weights efficiently. The primary hardware considerations are a powerful multi-core CPU, substantial RAM, fast storage, and robust cooling for sustained workloads.

Key Hardware Specifications for Local LLM For effective local LLM operation, focus on these core components:

-

Processor (CPU): A modern, high-core-count CPU is essential. Intel Core i5/i7 processors from the 12th generation or newer, featuring Performance (P) and Efficiency (E) cores, are ideal. Look for models with 10 or more cores and high turbo frequencies (e.g., 4.4 GHz+) to accelerate model loading and inference tasks.

-

Main Memory (RAM): System RAM is critical for loading model parameters. For running 7B parameter models smoothly, 16GB is the absolute minimum. For 13B+ models or multi-model workflows, 32GB to 64GB of DDR4/DDR5 RAM is recommended to prevent bottlenecks.

-

Storage (SSD): A fast NVMe SSD (512GB or larger) drastically reduces model load times and improves overall system responsiveness compared to traditional hard drives or eMMC storage.

-

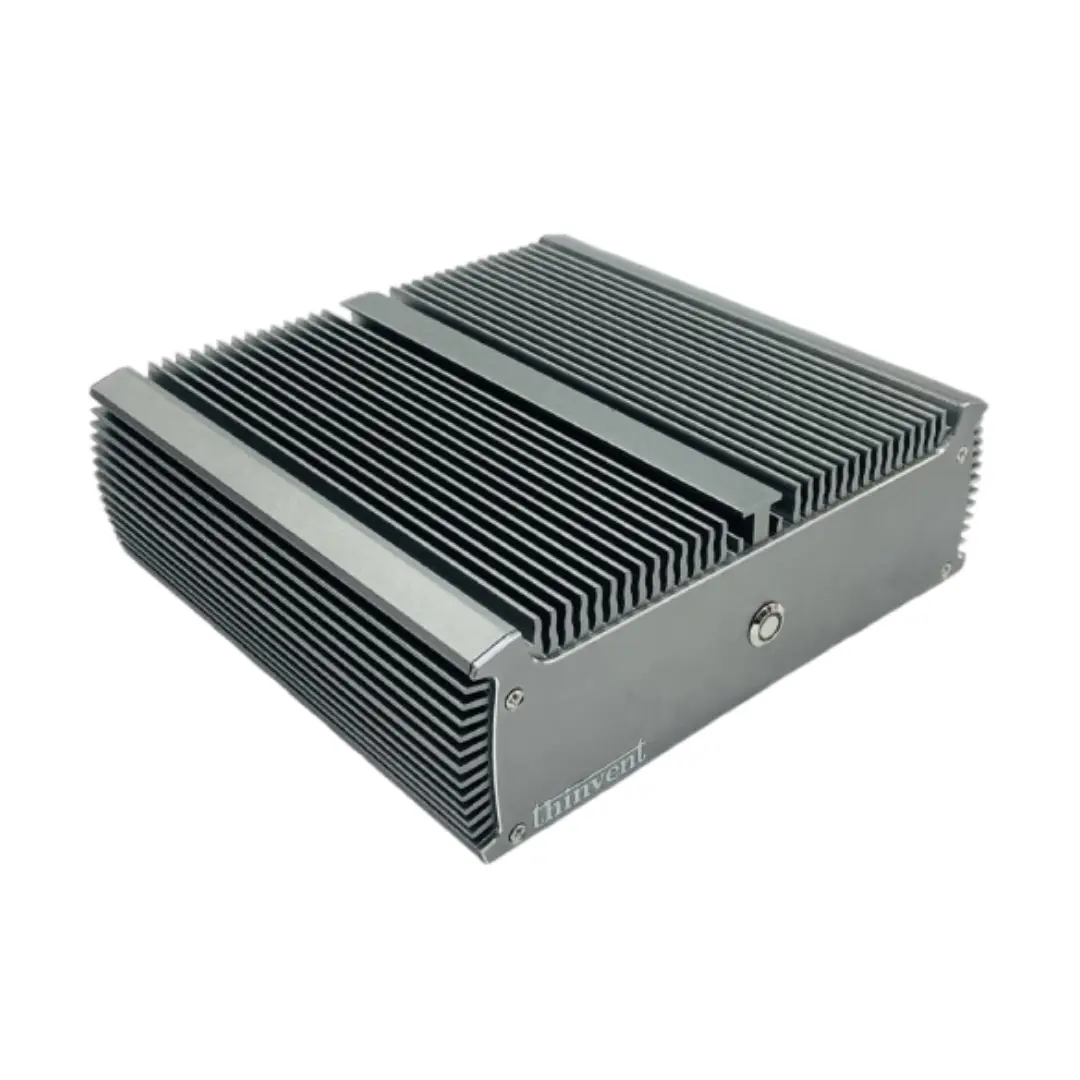

Form Factor & Cooling: Industrial PCs and high-performance Mini PCs with robust, often fanless, cooling solutions are preferable. They ensure stable performance during extended inference sessions without thermal throttling.
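The RAM and storage guidance above comes down to simple arithmetic: weight size is roughly parameter count times bytes per weight, and load time is bounded below by file size divided by read speed. The following is a minimal sketch of that arithmetic, not a benchmark. The function names, the 4 GB reserve, and the drive read speeds are illustrative assumptions rather than measured or vendor-supplied values.

```python
def weights_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billions * 1e9 * bits_per_weight / 8 / 1e9


def fits_in_ram(params_billions: float, bits_per_weight: int,
                ram_gb: float, reserve_gb: float = 4.0) -> bool:
    """True if the weights fit in ram_gb with reserve_gb left over.

    reserve_gb is an assumed allowance for the OS, the inference runtime,
    and the context cache; the real figure varies by setup.
    """
    return weights_gb(params_billions, bits_per_weight) + reserve_gb <= ram_gb


def load_seconds(size_gb: float, read_gb_per_s: float) -> float:
    """Lower bound on load time: file size divided by sequential read speed."""
    return size_gb / read_gb_per_s


# Assumed round-number sequential read speeds in GB/s (illustrative only).
DRIVES = {"NVMe SSD": 3.0, "SATA SSD": 0.5, "hard drive": 0.15}

if __name__ == "__main__":
    for params in (7, 13):
        for bits in (16, 8, 4):
            size = weights_gb(params, bits)
            verdict = "fits" if fits_in_ram(params, bits, ram_gb=16) else "does not fit"
            print(f"{params}B at {bits}-bit: ~{size:.1f} GB of weights, {verdict} in 16 GB")
    size = weights_gb(7, 16)
    for name, speed in DRIVES.items():
        print(f"Loading {size:.0f} GB from {name}: at least {load_seconds(size, speed):.0f} s")
```

Under these assumptions, a 7B model stored at 16 bits per weight needs about 14 GB for the weights alone, which is why 16GB of RAM leaves little headroom. The same model quantized to 4 bits per weight needs about 3.5 GB.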

Use Cases and Applications

Local LLM hardware is well suited to scenarios that require data privacy, low-latency responses, or offline operation. These include:

- Research & Development: Prototyping and fine-tuning models in a secure, controlled environment.

- Private Data Analysis: Processing sensitive corporate or personal documents without sending data to external servers.

- Edge AI Deployments: Integrating LLM capabilities into kiosks, digital signage, or specialized industrial equipment where internet connectivity is unreliable or undesired.

Thinvent Products for Local LLM Workloads

Thinvent's industrial and high-performance mini PCs are engineered for demanding computing tasks like local AI inference. Key product lines that meet these requirements include:

- Industrial PC (IPC) Series: Featuring processors like the Intel Core i5-1240P (12 cores) with support for up to 64GB RAM, these systems offer the expandability and durability needed for continuous operation.

- Aero Mini PC Series: Equipped with recent Intel Core processors (e.g., Core 5 120U, 10 cores) and up to 16GB RAM, these compact units deliver desktop-level performance in a small footprint.

- All-in-One PCs: Models like the Uno 23.8" with an Intel Core i5-1335U, configurable with up to 64GB RAM, provide an integrated, space-saving solution for AI-powered workstations.

These systems prioritize reliable performance, extensive connectivity, and flexible operating system support (Windows 11 Pro, Ubuntu Linux), making them a solid foundation for building a local LLM platform.
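Before setting up a local LLM on any machine, the thresholds from the hardware section (10 or more cores, 16GB RAM, a 512GB drive) can be checked with a short script. This is a minimal sketch using only the Python standard library. The function names and the 10% `slack` tolerance are assumptions made for illustration, and `os.cpu_count()` reports logical processors, which can exceed the physical core count.

```python
import os
import shutil

# Thresholds taken from the hardware guidance earlier in this article.
MIN_CORES = 10
MIN_RAM_GB = 16
MIN_DISK_GB = 512


def check_requirements(cores, ram_gb, disk_gb, slack=0.10):
    """Return a list of shortfalls; an empty list means every check passed.

    slack is an assumed tolerance, since operating systems report slightly
    less RAM and disk space than the nominal capacity. ram_gb may be None
    when it could not be detected, in which case that check is skipped.
    """
    problems = []
    if cores < MIN_CORES:
        problems.append(f"CPU: {cores} logical processors, want {MIN_CORES}+")
    if ram_gb is not None and ram_gb < MIN_RAM_GB * (1 - slack):
        problems.append(f"RAM: {ram_gb:.1f} GB, want {MIN_RAM_GB}+ GB")
    if disk_gb < MIN_DISK_GB * (1 - slack):
        problems.append(f"Disk: {disk_gb:.0f} GB, want {MIN_DISK_GB}+ GB")
    return problems


def detect_ram_gb():
    """Total physical RAM in GB, or None where os.sysconf is unavailable
    (for example on Windows)."""
    try:
        return os.sysconf("SC_PHYS_PAGES") * os.sysconf("SC_PAGE_SIZE") / 1e9
    except (AttributeError, ValueError, OSError):
        return None


def detect():
    """Return (logical processors, RAM in GB or None, root drive size in GB)."""
    cores = os.cpu_count() or 0
    root = os.path.abspath(os.sep)  # "/" on Linux, current drive on Windows
    disk_gb = shutil.disk_usage(root).total / 1e9
    return cores, detect_ram_gb(), disk_gb


if __name__ == "__main__":
    issues = check_requirements(*detect())
    print("All checks passed" if not issues else "\n".join(issues))
```

The check covers only the root drive and total capacities. It says nothing about cooling or sustained performance, which still need to be judged from the hardware itself.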