What Makes a PC Suitable for Local LLMs?

Running Large Language Models (LLMs) locally requires a specific hardware configuration focused on processing power, memory, and storage. The primary goal is to handle the intensive computational and memory demands of AI inference without relying on cloud services. A suitable PC must have a modern, multi-core processor, substantial RAM, and fast storage to load and run model parameters efficiently.

Key Specifications for Local LLM PCs

The most critical components are the CPU, system memory (RAM), and storage. For effective local LLM operation, especially with models of 7B parameters or more, the following specifications are recommended:

- Processor: A modern, multi-core CPU is essential. While dedicated accelerators such as discrete GPUs or NPUs offer the best performance, Intel Core i5 and i7 processors from the 12th generation onward can run quantized models on the CPU itself, using vector instructions such as AVX2 and AVX-VNNI; some inference runtimes can also offload part of the work to the integrated graphics.

- Main Memory (RAM): For CPU-based inference, system RAM holds the model weights while the model runs, so it largely determines which models you can load. For smaller models (such as 7B-parameter variants, typically run in quantized form), 16GB is a practical minimum. For larger or more complex models, 32GB or 64GB of RAM is highly recommended to ensure smooth performance.

- Storage (SSD): A fast Solid State Drive (SSD) drastically reduces model load times. A 512GB or 1TB NVMe SSD is ideal for storing the operating system, applications, and multiple LLM model files, each of which can be several gigabytes.
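To see why 16GB is a practical floor for 7B models, it helps to estimate memory from parameter count and precision: the weights take roughly parameters × bits per weight ÷ 8 bytes. The Python sketch below applies that rule of thumb and also estimates a best-case load time from file size and drive speed. The 1.2 overhead factor (for the KV cache, activations, and runtime buffers) and the SSD throughput figures are illustrative assumptions, not measured values, and real quantized file formats store extra scaling data, so actual files run somewhat larger than the raw estimate.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Raw size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def estimated_ram_gb(params_billion: float, bits_per_weight: float,
                     overhead: float = 1.2) -> float:
    """Weights plus an ASSUMED overhead factor for KV cache and runtime buffers."""
    return weight_memory_gb(params_billion, bits_per_weight) * overhead


def load_time_seconds(file_size_gb: float, read_gb_per_s: float) -> float:
    """Best-case load time: the whole file read at the drive's sequential speed."""
    return file_size_gb / read_gb_per_s


if __name__ == "__main__":
    for params, bits in [(7, 16), (7, 4), (13, 4)]:
        print(f"{params}B @ {bits}-bit: weights ~{weight_memory_gb(params, bits):.1f} GB, "
              f"RAM ~{estimated_ram_gb(params, bits):.1f} GB")

    # Illustrative throughput figures (assumptions): ~0.5 GB/s SATA SSD, ~3.5 GB/s NVMe SSD
    size = weight_memory_gb(7, 4)
    print(f"SATA: ~{load_time_seconds(size, 0.5):.1f} s, "
          f"NVMe: ~{load_time_seconds(size, 3.5):.1f} s")
```

For a 7B model the estimate gives about 14 GB of weights at 16-bit precision but about 3.5 GB at 4-bit, which is why quantized 7B models fit comfortably in 16GB while full-precision ones do not. Treat the load-time figure as a lower bound rather than a prediction.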

Use Cases and Applications

Deploying LLMs locally on a dedicated PC is ideal for scenarios requiring data privacy, low-latency responses, or operation in disconnected environments. Common applications include:

- Private AI Assistants: For businesses handling sensitive internal documents, financial data, or proprietary research.

- Development & Research: AI developers and researchers can experiment with, fine-tune, and test models without incurring cloud computing costs.

- Edge AI Solutions: Integrating conversational AI into kiosks, digital signage, or industrial control systems where constant internet connectivity is not guaranteed.

Recommended System Comparison

| Use Case | Recommended Processor | Minimum RAM | Recommended SSD Capacity | Key Consideration |
|---|---|---|---|---|
| Lightweight/Experimental | Intel Core i3 / N-series | 16 GB | 256 GB | Suitable for smaller models (e.g., 3B-7B parameters). |
| General Purpose & Development | Intel Core i5 (12th Gen+) | 32 GB | 512 GB | Balances cost and performance for 7B-13B parameter models. |
| Advanced/Production Use | Intel Core i7/i5 (13th/14th Gen) | 64 GB | 1 TB | Handles larger models (13B+), multi-model workloads, and fine-tuning. |
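The RAM column of the table can be turned into a rough selection rule: estimate how much memory a model needs and pick the smallest tier whose minimum RAM covers it. The Python sketch below does this. The 1.2 overhead factor and the 4 GB reserved for the operating system and other applications are hypothetical placeholders, and the check covers memory only; the tiers also reflect processor performance, so a model that fits in 16 GB may still run slowly on an entry-level CPU.

```python
from typing import Optional

# Tier names and minimum RAM taken from the comparison table above.
TIERS = [
    ("Lightweight/Experimental", 16),
    ("General Purpose & Development", 32),
    ("Advanced/Production Use", 64),
]

OS_RESERVE_GB = 4.0   # hypothetical headroom for the OS and other applications
OVERHEAD = 1.2        # hypothetical factor for KV cache and runtime buffers


def required_ram_gb(params_billion: float, bits_per_weight: float) -> float:
    """Estimated total RAM: weights (params x bits / 8) plus overhead and OS reserve."""
    weights_gb = params_billion * bits_per_weight / 8  # billions of params -> GB
    return weights_gb * OVERHEAD + OS_RESERVE_GB


def select_tier(params_billion: float, bits_per_weight: float) -> Optional[str]:
    """Return the smallest tier whose minimum RAM covers the estimate, or None."""
    need = required_ram_gb(params_billion, bits_per_weight)
    for name, min_ram_gb in TIERS:  # TIERS is ordered from smallest to largest
        if need <= min_ram_gb:
            return name
    return None


if __name__ == "__main__":
    for params, bits in [(7, 4), (13, 8), (70, 4), (70, 8)]:
        need = required_ram_gb(params, bits)
        print(f"{params}B @ {bits}-bit needs ~{need:.1f} GB -> {select_tier(params, bits)}")
```

With these assumptions, a 4-bit 70B model lands in the 64 GB tier, while the same model at 8-bit exceeds every tier listed and the function returns None.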

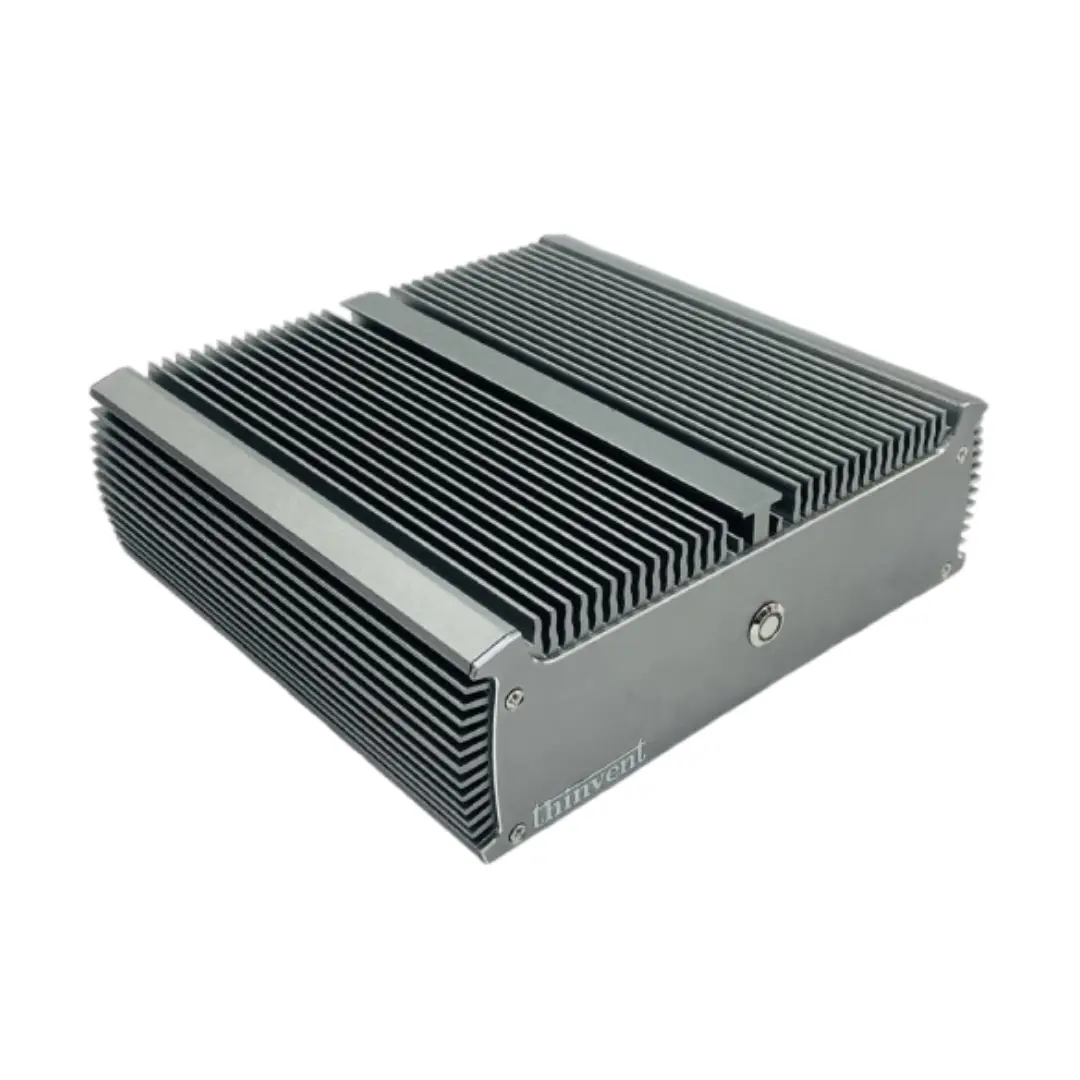

Thinvent PCs for Local LLM Workloads

Thinvent offers a range of industrial-grade computing solutions that provide the reliability and performance needed for local AI deployment. Our compact and fanless industrial PCs are engineered for 24/7 operation in diverse environments, making them perfect for embedding AI at the edge. For demanding LLM tasks, we recommend exploring our systems equipped with high-core-count Intel Core i5 and i7 processors, configurable with up to 64GB of DDR4/DDR5 RAM and high-speed NVMe SSD storage. This robust hardware foundation, combined with our support for various operating systems including Windows 11 and Linux, provides a stable and powerful platform for your local AI initiatives.