A GPU server is a specialized computing system equipped with multiple powerful Graphics Processing Units (GPUs) designed for massively parallel processing. Unlike standard CPU-only servers, these machines excel at computationally intensive workloads by distributing work across thousands of GPU cores that run in parallel. They are a core building block of modern data centers, research facilities, and development environments.
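
To make "distributing work across thousands of GPU cores" more concrete, here is a minimal Python sketch of how a CUDA-style kernel launch maps a one-dimensional array onto a grid of thread blocks. Each "thread" derives a global index from its block and thread IDs and processes one element. The block size of 256 is an assumed, commonly used value, and the function names are hypothetical. On a real GPU these threads execute concurrently instead of in a Python loop.

```python
import math


def launch_config(n_elements: int, block_size: int = 256) -> tuple[int, int]:
    """Return (grid_size, block_size) so that grid_size * block_size >= n_elements."""
    grid_size = math.ceil(n_elements / block_size)
    return grid_size, block_size


def simulate_vector_add(a: list[float], b: list[float], block_size: int = 256) -> list[float]:
    """Emulate a 1-D vector-add kernel: one 'thread' per output element."""
    n = len(a)
    out = [0.0] * n
    grid_size, block_dim = launch_config(n, block_size)
    for block_idx in range(grid_size):          # on a GPU, blocks run concurrently
        for thread_idx in range(block_dim):     # and so do the threads inside each block
            i = block_idx * block_dim + thread_idx  # global thread index
            if i < n:                           # guard: the last block may be only partly used
                out[i] = a[i] + b[i]
    return out


if __name__ == "__main__":
    print(launch_config(1_000_000))  # → (3907, 256)
```

The bounds check matters because the element count is rarely an exact multiple of the block size, so the final block usually contains idle threads.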

Key Specifications and Technical Details

A robust GPU server is defined by its core components. It typically features multiple high-end GPUs from manufacturers like NVIDIA or AMD, supported by a high-core-count CPU (such as Intel Xeon or AMD EPYC) to manage data flow. Critical specifications include:

- GPU Configuration: Support for 4, 8, or more professional-grade GPUs (e.g., NVIDIA A100, H100, L40S).
- System Memory: Large-capacity ECC RAM (128 GB to 1 TB or more) to keep the GPUs supplied with data.
- Storage: High-speed NVMe SSDs, often in RAID configurations, for rapid data access.
- Networking: High-bandwidth interfaces such as 10/25/100 Gigabit Ethernet or InfiniBand for communication between cluster nodes.
- Power & Cooling: Redundant high-wattage power supplies (PSUs) and advanced cooling systems to handle significant thermal output.
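
The power and cooling requirements are ultimately simple arithmetic. Below is a minimal sketch of a power budget and N+1 PSU sizing. All wattages are assumed placeholders to be replaced with datasheet figures (the 700 W per-GPU value is in line with SXM-form-factor H100 modules). The 20% headroom, the single spare supply, and all names are illustrative assumptions, not a vendor sizing method.

```python
import math
from dataclasses import dataclass


@dataclass
class PowerInputs:
    gpu_count: int
    gpu_w: float    # per-GPU board power (assumed; take from the GPU datasheet)
    cpu_count: int
    cpu_w: float    # per-CPU TDP (assumed)
    other_w: float  # RAM, NVMe, NICs, fans (assumed lump sum)


def peak_draw_w(p: PowerInputs) -> float:
    """Worst-case load if every component draws its rated power at once."""
    return p.gpu_count * p.gpu_w + p.cpu_count * p.cpu_w + p.other_w


def psu_count(p: PowerInputs, psu_rating_w: float, headroom: float = 0.2, spares: int = 1) -> int:
    """N + spares: the N active supplies alone must carry peak load plus headroom."""
    n = math.ceil(peak_draw_w(p) * (1 + headroom) / psu_rating_w)
    return n + spares


def heat_btu_per_hr(watts: float) -> float:
    """Nearly all electrical power becomes heat; 1 W is about 3.412 BTU/hr."""
    return watts * 3.412


if __name__ == "__main__":
    eight_gpu = PowerInputs(gpu_count=8, gpu_w=700, cpu_count=2, cpu_w=350, other_w=1000)
    print(peak_draw_w(eight_gpu))                   # → 7300
    print(psu_count(eight_gpu, psu_rating_w=3000))  # → 4
```

Because the heat load tracks the power draw, the same peak figure also drives the cooling budget for the rack.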

Primary Use Cases and Applications

GPU servers are the backbone of several advanced technological fields:

- Artificial Intelligence & Machine Learning: Training complex neural networks and running deep learning inference.
- High-Performance Computing (HPC): Scientific simulations, financial modeling, and genomic research.
- Graphics Rendering & VFX: Rendering 3D animation, visual effects, and architectural visualizations.
- Data Analytics & Big Data: Accelerating data processing and real-time analytics pipelines.
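
For AI workloads, the first sizing question is usually how many GPUs a model needs. The sketch below uses a common back-of-the-envelope rule. Inference with FP16/BF16 weights needs about 2 bytes per parameter. Mixed-precision training with the Adam optimizer needs about 16 bytes per parameter for model states (FP16 weights and gradients, plus FP32 master weights and two Adam moments). Activations, KV caches, and framework overhead are ignored. The sketch also assumes the model states are sharded evenly across GPUs and that 90% of each card's memory is usable. The 80 GB card size and all function names are illustrative assumptions.

```python
import math

# Approximate bytes of model state per parameter (activations excluded).
BYTES_PER_PARAM = {
    "inference_fp16": 2,        # FP16/BF16 weights only
    "training_mixed_adam": 16,  # 2 (FP16 weights) + 2 (FP16 grads) + 4 (FP32 master) + 8 (Adam moments)
}


def model_state_gb(n_params: float, mode: str) -> float:
    """Model-state memory in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * BYTES_PER_PARAM[mode] / 1e9


def min_gpus(n_params: float, mode: str, gpu_mem_gb: float = 80.0, usable_fraction: float = 0.9) -> int:
    """Smallest GPU count whose combined usable memory holds the sharded model states."""
    needed_gb = model_state_gb(n_params, mode)
    return math.ceil(needed_gb / (gpu_mem_gb * usable_fraction))


if __name__ == "__main__":
    print(model_state_gb(7e9, "training_mixed_adam"))  # → 112.0
    print(min_gpus(7e9, "inference_fp16"))             # → 1
    print(min_gpus(7e9, "training_mixed_adam"))        # → 2
```

Under these assumptions, a 7-billion-parameter model fits on one GPU for inference but needs at least two for training. Real jobs need more memory once activations are included.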

GPU Server vs. High-End Workstation

While both can house powerful GPUs, they serve different scales of operation.

| Feature | GPU Server | High-End Workstation |
|---|---|---|
| Primary Role | Data center / rack-mounted compute node | Individual user desktop for design/engineering |
| Form Factor | Rackmount (1U, 2U, 4U) | Tower or small form factor |
| GPU Support | Multiple GPUs (4-10+), often with NVLink | Typically 1-4 GPUs |
| Remote Management | Essential (IPMI, iDRAC, Redfish) | Optional or not available |
| Scalability | Designed for clustering and expansion | Limited to single-system performance |
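
Remote management is what makes a rack of GPU servers operable at scale. DMTF Redfish exposes each server as a `ComputerSystem` resource over HTTPS, typically at `/redfish/v1/Systems/<id>`, where the ID format varies by vendor. The sketch below parses such a resource and flags nodes that need attention. The JSON is a hypothetical sample rather than output from a real BMC. The helper names are also hypothetical, and fetching the document with BMC credentials is left out.

```python
import json

# Hypothetical ComputerSystem document, shaped like a Redfish response.
SAMPLE_SYSTEM = """{
  "@odata.id": "/redfish/v1/Systems/1",
  "PowerState": "On",
  "Status": {"State": "Enabled", "Health": "OK"},
  "ProcessorSummary": {"Count": 2},
  "MemorySummary": {"TotalSystemMemoryGiB": 1024}
}"""


def summarize_system(doc: dict) -> dict:
    """Pull a few standard ComputerSystem properties, tolerating missing fields."""
    return {
        "id": doc.get("@odata.id"),
        "power": doc.get("PowerState", "Unknown"),
        "health": doc.get("Status", {}).get("Health", "Unknown"),
        "cpus": doc.get("ProcessorSummary", {}).get("Count", 0),
        "memory_gib": doc.get("MemorySummary", {}).get("TotalSystemMemoryGiB", 0),
    }


def needs_attention(summary: dict) -> bool:
    """Flag a node that is powered off or not reporting healthy."""
    return summary["power"] != "On" or summary["health"] != "OK"


if __name__ == "__main__":
    node = summarize_system(json.loads(SAMPLE_SYSTEM))
    print(node["memory_gib"], needs_attention(node))  # → 1024 False
```

Running a check like this across every node's BMC is the kind of fleet-level task that workstations, which typically lack a BMC, cannot support.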

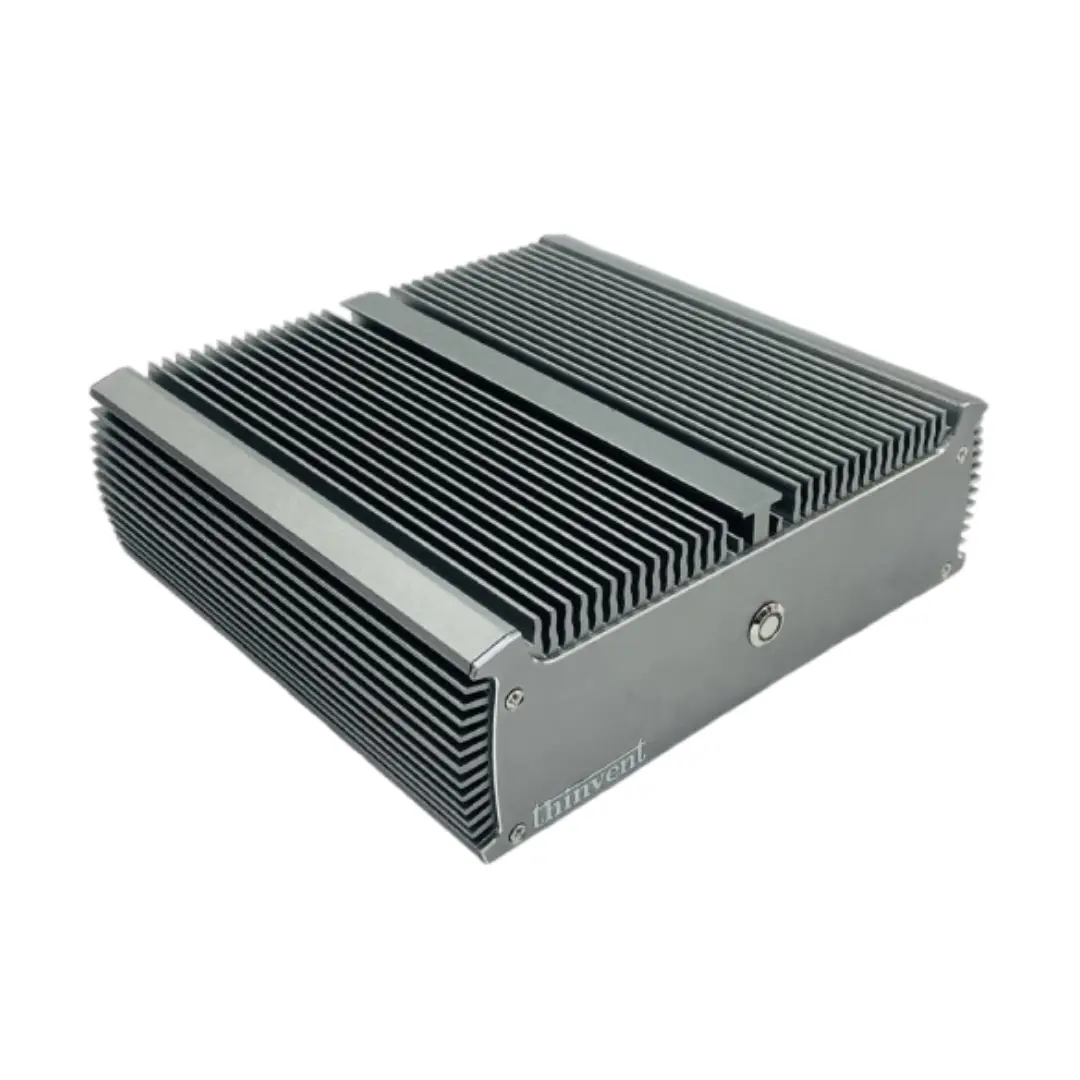

Thinvent GPU Server Solutions

While Thinvent specializes in robust, fanless industrial computers and mini PCs for edge computing, the engineering experience behind that reliable, performance-oriented hardware also informs our understanding of what demanding compute infrastructure requires. For GPU-accelerated tasks at the edge or in constrained environments, our high-performance industrial PCs, with capable integrated graphics or expansion options, can serve as complementary nodes or inference engines within a larger distributed system. We focus on delivering reliable, mission-critical computing hardware built for 24/7 operation.