What is a PC for LLM Training?

A PC for LLM (Large Language Model) training is a specialized computer system engineered to handle the immense computational demands of developing and fine-tuning AI models. Unlike standard desktop PCs, these systems require powerful multi-core processors, substantial amounts of high-speed RAM, and fast, high-capacity storage to manage the billions of parameters and massive datasets involved in the training process. They serve as cost-effective, dedicated nodes for research, development, and smaller-scale training tasks.

Key Specifications for LLM Training PCs

For effective LLM training, a PC must prioritize raw processing power and memory bandwidth. The most critical components are:

- High-Core-Count Processors: Modern Intel Core i5, i7, and i9 processors (12th Gen and newer) with Performance-cores (P-cores) are essential. A high core count (e.g., 10, 12, or more) allows for parallel processing of training tasks.

- Abundant System Memory (RAM): Training even modest models requires significant RAM to hold the model parameters and training data in memory. Configurations start at 32GB, with 64GB or more being recommended for serious work.

- Fast, High-Capacity Storage: A large NVMe SSD (512GB or 1TB+) is crucial for rapidly loading training datasets and checkpointing model states during long training runs.

- Robust Cooling: Sustained 100% CPU utilization during training generates considerable heat. A reliable, high-performance cooling solution is mandatory to prevent thermal throttling and ensure stability.
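
As a rough illustration of the memory and storage points above, the following Python sketch estimates the memory needed for full training and the size of a checkpoint from a parameter count. It assumes 32-bit weights and gradients plus two 32-bit optimizer states per parameter (as an Adam-style optimizer keeps), and it ignores activations and data buffers, so real usage will be higher. The function names and constants are illustrative and do not come from any library.

```python
# Back-of-the-envelope sizing for full (non-parameter-efficient) training.
# Assumptions, not taken from the article: fp32 weights and gradients,
# two fp32 optimizer states per parameter, activations excluded.

BYTES_FP32 = 4
GB = 10 ** 9  # decimal gigabytes, matching how RAM and SSDs are marketed


def training_memory_gb(num_params: int, optimizer_states: int = 2) -> float:
    """Memory for weights + gradients + optimizer states, in GB."""
    copies = 1 + 1 + optimizer_states  # weights, gradients, optimizer states
    return num_params * BYTES_FP32 * copies / GB


def checkpoint_size_gb(num_params: int,
                       include_optimizer: bool = True,
                       optimizer_states: int = 2) -> float:
    """Disk space for one checkpoint, in GB (gradients are not saved)."""
    copies = 1 + (optimizer_states if include_optimizer else 0)
    return num_params * BYTES_FP32 * copies / GB


if __name__ == "__main__":
    for label, n in [("125M", 125_000_000),
                     ("1B", 1_000_000_000),
                     ("7B", 7_000_000_000)]:
        print(f"{label}: train ~{training_memory_gb(n):.1f} GB, "
              f"checkpoint ~{checkpoint_size_gb(n):.1f} GB")
```

Under these assumptions, a 1-billion-parameter model needs about 16 GB before activations, which fits a 32GB configuration, while a 7-billion-parameter model would need about 112 GB for full training. Each full checkpoint of the 1-billion-parameter model takes about 12 GB, so a 512GB SSD fills quickly if many checkpoints are retained.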

Use Cases and Applications

LLM training PCs are used in various professional and research settings:

- AI Research & Development: Academic institutions and corporate R&D labs use them for prototyping new model architectures and algorithms.

- Model Fine-Tuning: Businesses can customize pre-trained foundation models (like Llama or Mistral) for specific domains such as legal, medical, or customer service using their proprietary data.

- Edge AI Development: Teams use them to develop and test smaller, efficient models intended for deployment on edge devices or in resource-constrained environments.

Comparing PC Configurations for AI Workloads

| Use Case | Recommended Processor | Minimum RAM | Recommended Storage | Notes |
|---|---|---|---|---|
| Lightweight Fine-Tuning | Intel Core i5 (12th/13th Gen) | 32 GB | 512 GB SSD | Suitable for small datasets and parameter-efficient tuning methods. |
| Medium-Scale Training | Intel Core i7/i9 (13th/14th Gen) | 64 GB | 1 TB NVMe SSD | Handles larger models and datasets for more comprehensive projects. |
| Research & Prototyping | Intel Core i9 (14th Gen) | 64 GB+ | 1 TB+ NVMe SSD | Provides headroom for experimenting with different model sizes and techniques. |
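
The table above can also be expressed as data, which makes it easy to check a planned configuration against each tier. The sketch below is one possible encoding: the `Tier` class and `supported_use_cases` function are hypothetical names, 1 TB is treated as 1000 GB, and the processor column is deliberately left out of the comparison.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    use_case: str
    min_ram_gb: int
    min_storage_gb: int


# RAM and storage minimums copied from the comparison table.
# Assumption: 1 TB is treated as 1000 GB; processor model is not checked.
TIERS = [
    Tier("Lightweight Fine-Tuning", 32, 512),
    Tier("Medium-Scale Training", 64, 1000),
    Tier("Research & Prototyping", 64, 1000),
]


def supported_use_cases(ram_gb: int, storage_gb: int) -> list[str]:
    """Return the use cases whose RAM and storage minimums are both met."""
    return [
        tier.use_case
        for tier in TIERS
        if ram_gb >= tier.min_ram_gb and storage_gb >= tier.min_storage_gb
    ]


if __name__ == "__main__":
    print(supported_use_cases(ram_gb=32, storage_gb=512))
    print(supported_use_cases(ram_gb=64, storage_gb=2000))
```

Because the last two tiers share the same RAM and storage minimums, the processor is what separates them, so a real selection tool would need to compare CPU generation and core count as well.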

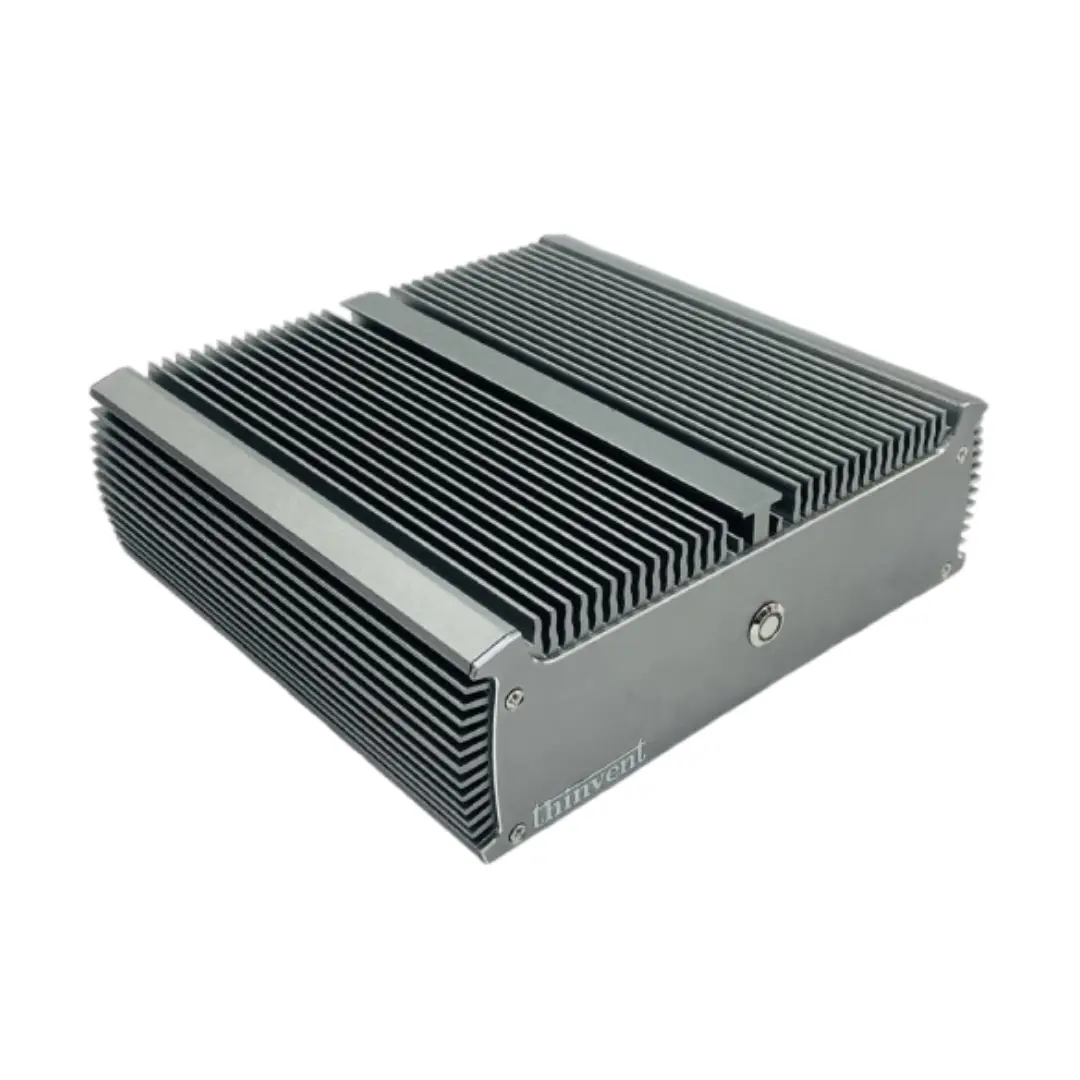

Thinvent Industrial PCs for AI Development

Thinvent offers a range of robust, fanless industrial PCs that are ideal for the demanding, continuous operation required for LLM training. Our systems feature the latest Intel Core processors, support for high-capacity DDR4/DDR5 memory, and multiple high-speed NVMe SSD slots. Built with industrial-grade components and passive cooling, they ensure unparalleled reliability and silence, making them perfect for lab environments, digital signage AI integration, and dedicated AI inference/training nodes. Explore our configurable Industrial PC and high-performance Mini PC lines to build a system tailored to your specific AI workload requirements.